MoRE Developer

First, this section describes how to launch the MoRE Developer. Then the design of the MoRE Developer user interface is described. Next, the procedure for embedding an alternative spatial basis for modeling, different input data and modeling approaches, and for generating results is explained. Finally, a short overview of the different tools in MoRE is given.

Contents

- 1 Launch MoRE

- 2 Reading and writing mode

- 3 Design of the MoRE Developer GUI

- 4 Implementation of input data and modeling approaches

- 4.1 Implementing a spatial basis for modeling

- 4.2 Create new data sets

- 4.3 Implement new input data in MoRE

- 4.4 Implementation of new modeling approaches

- 5 Generate and export results

- 6 Modeling with variants in MoRE

- 7 Representation of measures in emission modeling

- 8 Aggregation of emissions on the basis of planning units

- 9 Groundwater transfer

- 10 Validation of results with observed river loads

- 11 Documentation module

- 12 Tools of MoRE Developer

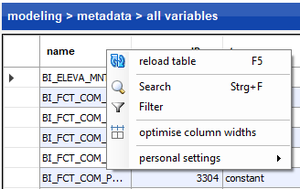

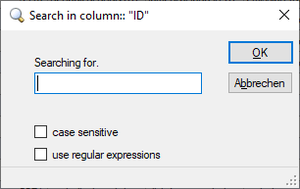

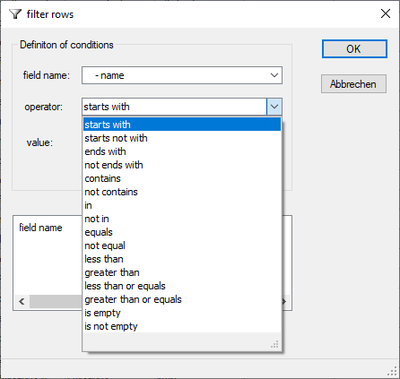

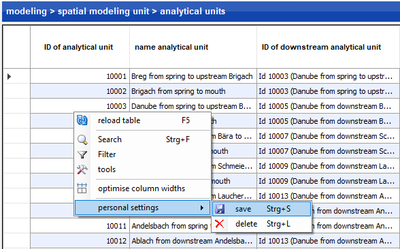

- 12.1 Finding and filtering data records

- 12.2 Change data records

- 12.3 Delete data records

- 12.4 Export of data records

- 12.5 Statistical analysis of input data

- 12.6 Statistical analysis of final results

- 12.7 Transparency and traceability

- 12.8 Attachment of files (flow charts)

- 12.9 Customizing the user interface

- 13 References

Launch MoRE

To launch the multi-user version of MoRE, select the corresponding shortcut (“MoRE_UBA_EN”) in the folder “UBA” or in the folder of another project. Confirm the execution if prompted.

To launch the single-user version (SQLite version), run the file “MoRE.exe” in the corresponding folder.

Reading and writing mode

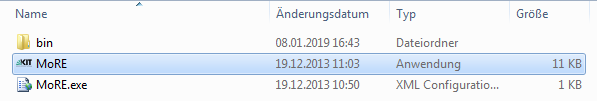

MoRE always starts in reading mode. In this mode, no changes can be made except to the interface configuration. To add, edit or delete variables, input data or other items, the writing mode has to be activated. The currently active mode is shown in the bottom bar of the GUI. The mode can be switched from reading to writing (or vice versa) by clicking the green or yellow button, respectively.

Design of the MoRE Developer GUI

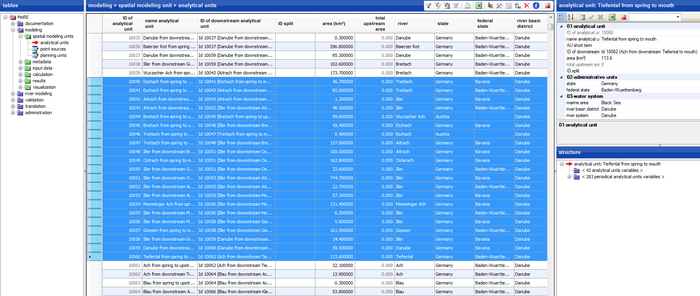

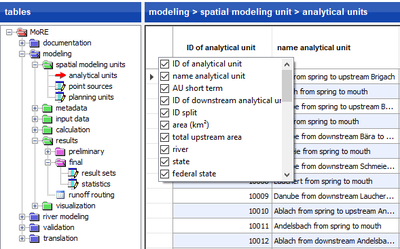

The MoRE Developer GUI consists of the following components:

- an overview of all object tables (left window),

- a data grid (middle window), in which the records of the selected object table are displayed,

- an attribute window (upper right window) and

- a structure window (lower right window), both of which show additional information about the record selected in the data grid.

Further, MoRE features two toolbars with different functionalities which appear in the title bar of the data grid as well as in the title bar of the attribute window. All mentioned components are explained in detail in the following sections.

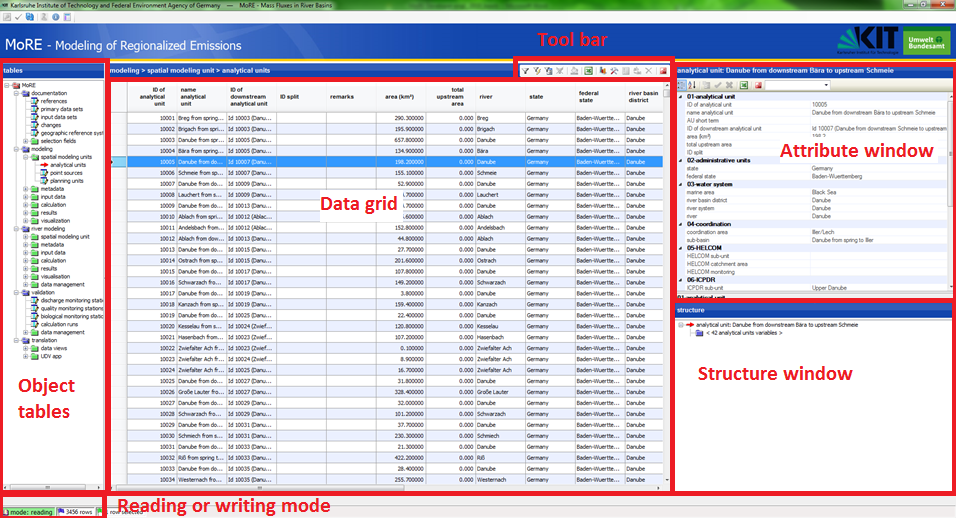

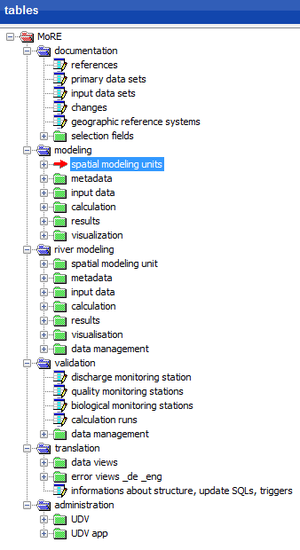

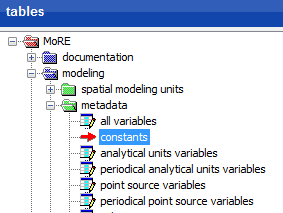

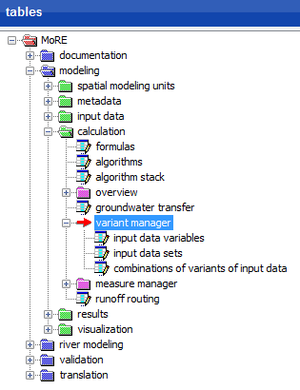

Object tables

The MoRE Developer GUI provides access to the data in the PostgreSQL database. These data are listed in object tables, which are grouped in the folders documentation, modeling, validation, and translation and data management (the latter only visible to administrators). An object table is selected by clicking on its name. A red arrow then appears in front of the name of the selected object table, and the name is highlighted in blue. Only one object table can be selected at a time.

Object table documentation

In the object table documentation, the metadata of the primary data and of the input data created from them are managed.

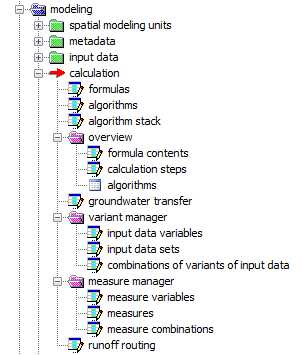

Object table modeling

The object table modeling contains, first of all, the spatial modeling units and the metadata of the variables to which input data are assigned. The input data values of the variables can also be viewed here. All calculation approaches are defined in this module, and the generated results are shown. Finally, settings for the visualization of data in the MoRE Visualizer can be made here. All subunits are explained in detail in the following sections.

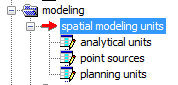

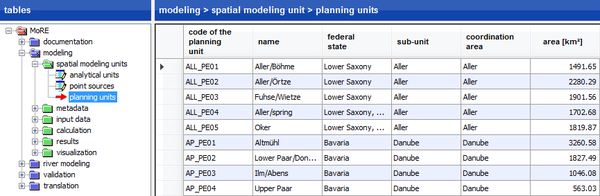

Spatial modeling units

The spatial modeling units are the basis for the modeling. At present, analytical units, point sources and planning units are implemented as spatial modeling units.

Analytical units are the primary spatial modeling units, for which all calculations can ultimately be made. They are, for example, hydrological sub-catchments or water bodies. To model at the level of analytical units, all spatially distributed basic data have to be preprocessed in a GIS into mean values or sums per analytical unit. This applies especially to data related to emission pathways with diffuse emission patterns, e.g. deposition rates of nitrogen or heavy metals.

Pathways that lead to point emissions can be modeled separately as emissions from point sources. One purpose of this is to avoid inaccuracies that may occur when point sources are first aggregated over the area of analytical units. The detailed representation of individual point sources allows targeted measures to be implemented, e.g. for municipal waste water treatment plants, taking into account their size and treatment steps. In addition, improved modeling approaches can easily be included, especially for substances and substance groups whose emissions correlate well with point source-specific parameters. Municipal waste water treatment plants, industrial direct dischargers and abandoned mining sites are currently used for modeling. Other point sources can be added to MoRE with little effort (see the section Implementing new point sources).

In Germany, planning units play a major role as the spatial reference for the Water Framework Directive (WFD). They are larger spatial units used for the WFD management plans. Planning units are implemented in MoRE, and an algorithm was developed that aggregates the emissions of analytical units to planning unit emissions.

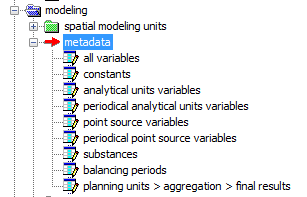

Metadata

The metadata object table contains information about variables, visualization and literature references. It also contains a change history of the model and the management of preset selection boxes.

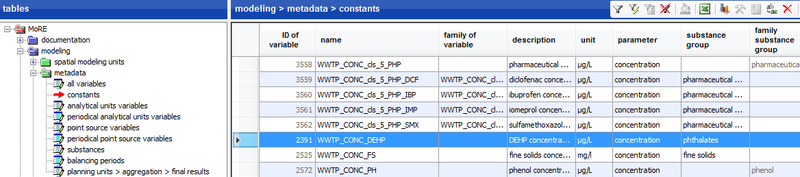

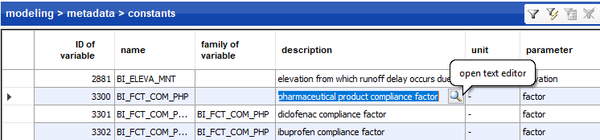

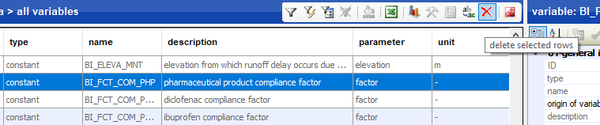

The table all variables lists the name, type and reference of all variables and constants, as well as the number of formulas in which each of them is used. Variables are classified by type as constant, spatial, spatial and periodical, point source, or periodical point source variables. According to this classification, the variables are listed in separate tables (metadata > constants, metadata > analytical units variables, metadata > periodical analytical units variables, metadata > point source variables and metadata > periodical point source variables), which provide further attributes such as description, family membership, unit or pathway reference.

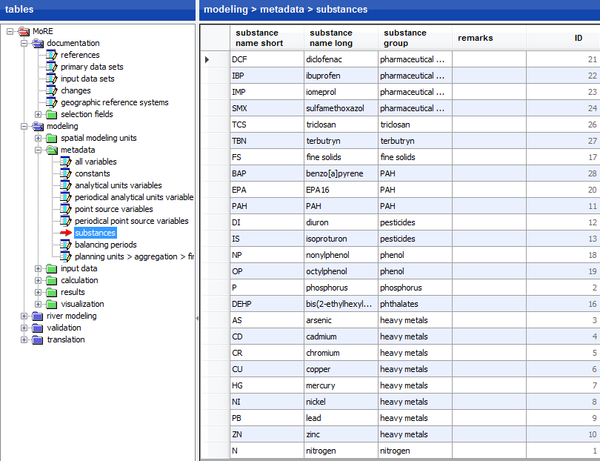

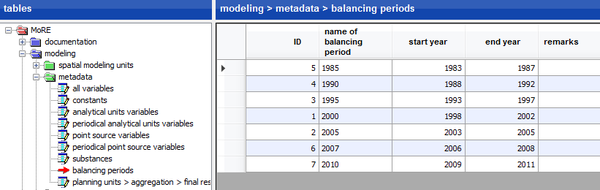

In addition, substances are assigned to substance groups here (metadata > substances), and years are allocated to balancing periods (metadata > balancing periods).

In the submenu planning units > aggregation > final results, the user can define which results are aggregated to the level of planning units so that they can be exported.

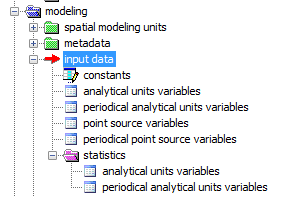

Input data

The object table input data contains all (preprocessed) data needed for modeling. Input data are usually derived from primary data, because modeling requires data at the level of analytical units or point sources. Primary data comprise general and substance-specific data, which may be continuous (e.g. digital elevation models) or discrete, regionalized data (e.g. land use, soil type, topography, atmospheric deposition). Some primary data are only available as point data but are required as regionalized information; in this case they are interpolated with geostatistical methods. Some time-varying primary data have gaps in their time series, so temporal interpolation methods are needed. If necessary, the primary data are aggregated (area-weighted) to the analytical units in a geographic information system as a final step. The input data obtained this way can then be imported into the database using the graphical user interface MoRE Developer.
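The two preprocessing steps named above, area-weighted aggregation to analytical units and temporal gap filling, can be illustrated with a short sketch. It only shows the arithmetic: in practice these steps are performed in a GIS or other tools before the data are imported into MoRE. The input shapes (a list of intersection pieces, a year-to-value dictionary) and the choice of plain linear interpolation are assumptions for illustration, not the method prescribed by MoRE. An area-weighted mean suits intensities such as deposition rates; extensive quantities such as areas would be summed instead.

```python
from collections import defaultdict


def area_weighted_mean(pieces):
    """Aggregate a primary-data layer to analytical units.

    pieces: iterable of (au_id, area, value) tuples, one per polygon obtained by
    intersecting the primary-data layer with the analytical units (assumed input).
    Returns {au_id: area-weighted mean value}.
    """
    weighted_sum = defaultdict(float)
    area_sum = defaultdict(float)
    for au_id, area, value in pieces:
        weighted_sum[au_id] += area * value
        area_sum[au_id] += area
    return {au: weighted_sum[au] / area_sum[au] for au in area_sum if area_sum[au] > 0}


def fill_gaps_linear(series):
    """Fill interior gaps of a yearly time series by linear interpolation.

    series: {year: value or None}. Years before the first or after the last known
    value stay None (no extrapolation).
    """
    known = sorted(y for y, v in series.items() if v is not None)
    filled = dict(series)
    for year in sorted(series):
        if filled[year] is not None:
            continue
        before = [k for k in known if k < year]
        after = [k for k in known if k > year]
        if before and after:
            y0, y1 = before[-1], after[0]
            v0, v1 = series[y0], series[y1]
            filled[year] = v0 + (v1 - v0) * (year - y0) / (y1 - y0)
    return filled


print(area_weighted_mean([(1, 2.0, 10.0), (1, 2.0, 20.0), (2, 1.0, 5.0)]))
print(fill_gaps_linear({2000: 1.0, 2001: None, 2002: 3.0, 2003: None}))
```

For analytical unit 1, two pieces of equal area with values 10 and 20 give a mean of 15; the missing year 2001 lies halfway between 1.0 and 3.0 and is filled with 2.0, while 2003 is left empty.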

Based on the temporal and spatial variability, input data can be arranged in five groups:

- Constant data (constants)

- Data varying in space (analytical units variables)

- Data varying in space and time (periodical analytical units variables)

- Point source specific data (point source variables)

- Point source specific data varying in time (periodical point source variables)
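The five groups differ in which identifiers are needed to address a single value: none for a constant, an analytical unit ID for spatial data, an analytical unit ID and a year for periodical data, and the same pattern for point sources. The sketch below encodes this as a lookup-key scheme. The enum and the field names `au_id`, `ps_id` and `year` are hypothetical and do not reflect MoRE's actual database schema.

```python
from enum import Enum


class VariableType(Enum):
    CONSTANT = "constants"
    ANALYTICAL_UNIT = "analytical units variables"
    PERIODICAL_ANALYTICAL_UNIT = "periodical analytical units variables"
    POINT_SOURCE = "point source variables"
    PERIODICAL_POINT_SOURCE = "periodical point source variables"


# Identifiers needed to look up one value of a variable of each type (hypothetical names).
KEY_FIELDS = {
    VariableType.CONSTANT: (),
    VariableType.ANALYTICAL_UNIT: ("au_id",),
    VariableType.PERIODICAL_ANALYTICAL_UNIT: ("au_id", "year"),
    VariableType.POINT_SOURCE: ("ps_id",),
    VariableType.PERIODICAL_POINT_SOURCE: ("ps_id", "year"),
}


def lookup_key(var_type, **ids):
    """Build the lookup key for one value, rejecting missing or superfluous identifiers."""
    fields = KEY_FIELDS[var_type]
    if set(ids) != set(fields):
        raise ValueError(f"{var_type.value} needs exactly {fields}, got {tuple(ids)}")
    return tuple(ids[f] for f in fields)


print(lookup_key(VariableType.PERIODICAL_ANALYTICAL_UNIT, au_id=7, year=2010))  # (7, 2010)
```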

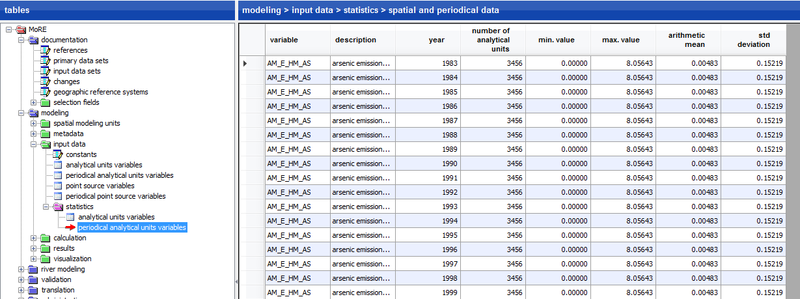

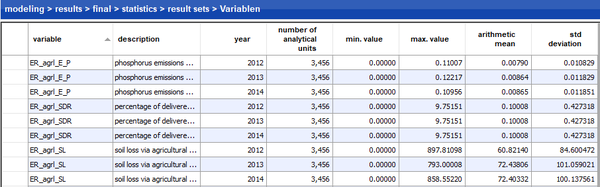

MoRE can also analyze analytical units variables and periodical analytical units variables statistically. The minimum, maximum, mean and standard deviation are shown as statistical measures in the data grid.
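The four statistical measures can be reproduced outside MoRE, e.g. to cross-check values exported to Excel. The following sketch uses Python's `statistics` module. Two points are assumptions: that the sample (not population) standard deviation is meant, and that statistics of periodical variables are grouped per year; neither is confirmed by the manual.

```python
import statistics
from collections import defaultdict


def describe(values):
    """Minimum, maximum, mean and standard deviation of one variable.

    Missing values (None) are ignored. The sample standard deviation is used here;
    whether MoRE uses the sample or the population formula is an assumption.
    """
    vals = [v for v in values if v is not None]
    if not vals:
        raise ValueError("no values to describe")
    return {
        "min": min(vals),
        "max": max(vals),
        "mean": statistics.fmean(vals),
        "std": statistics.stdev(vals) if len(vals) > 1 else 0.0,
    }


def describe_per_year(values):
    """values: {(au_id, year): value} for a periodical analytical units variable.

    Returns one set of statistics per year (hypothetical grouping).
    """
    by_year = defaultdict(list)
    for (_au_id, year), value in values.items():
        by_year[year].append(value)
    return {year: describe(vals) for year, vals in sorted(by_year.items())}


print(describe([1.0, 3.0, None]))
print(describe_per_year({(1, 2010): 2.0, (2, 2010): 4.0, (1, 2011): 5.0}))
```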

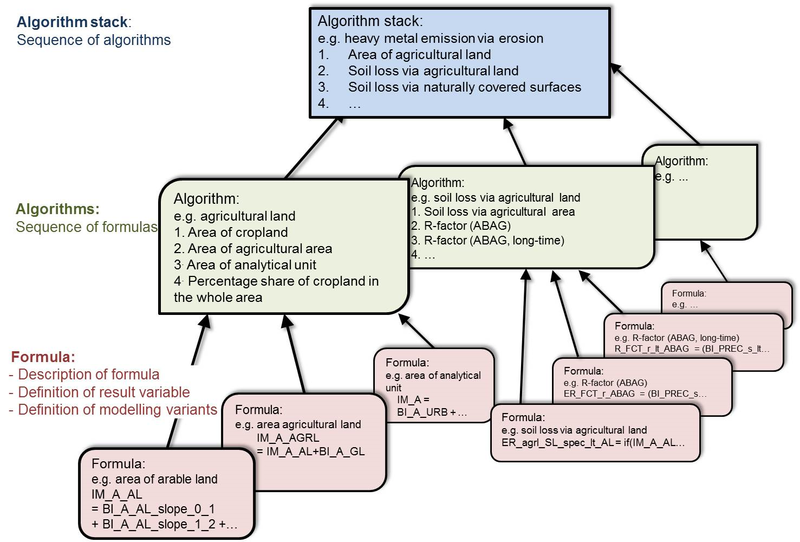

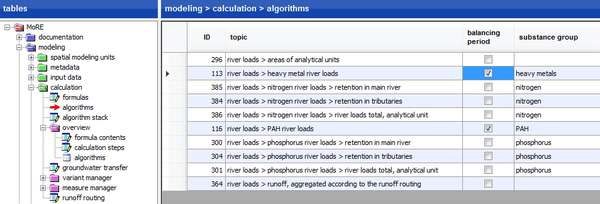

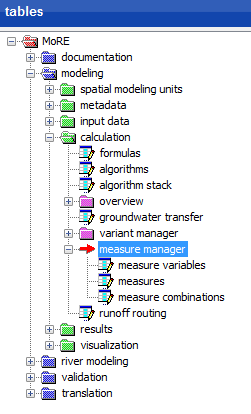

Calculation

The object table calculation contains the model algorithms for calculating emissions into surface waters via the different emission pathways. An algorithm stack usually represents a balancing approach for a pathway of water or substance flow, e.g. “nitrogen emissions via groundwater”. Each algorithm stack consists of one or more algorithms or algorithm stacks. The algorithms in turn consist of a sequence of calculation steps, each represented by an individual formula. The folder overview lists the formulas, algorithms and algorithm stacks in a more clearly arranged way. More details about the implementation of calculation approaches can be found in the section Implementation of new modeling approaches. The object table groundwater transfer is also filed here, as are the variant manager, the measure manager and information about the runoff routing.
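Because an algorithm stack may contain both algorithms and further algorithm stacks, the hierarchy described above is a recursive (composite) structure. The sketch below models it with dataclasses and flattens a stack into the ordered list of formulas that would be evaluated. The class and field names and the variable names in the example (`Q_GW`, `Q_SUR`, `E_GW`) are hypothetical; they only illustrate the nesting, not MoRE's internal data model.

```python
from dataclasses import dataclass, field
from typing import List, Union


@dataclass
class Formula:
    target: str       # variable computed by this calculation step
    expression: str   # right-hand side, entered as plain text


@dataclass
class Algorithm:
    name: str
    steps: List[Formula]  # calculation steps in execution order


@dataclass
class AlgorithmStack:
    name: str
    members: List[Union["Algorithm", "AlgorithmStack"]] = field(default_factory=list)


def flatten(node) -> List[Formula]:
    """Return all formulas of an algorithm or (nested) algorithm stack in execution order."""
    if isinstance(node, Algorithm):
        return list(node.steps)
    formulas = []
    for member in node.members:
        formulas.extend(flatten(member))  # members may themselves be stacks
    return formulas


runoff = Algorithm("runoff", [Formula("Q_GW", "..."), Formula("Q_SUR", "...")])
emission = Algorithm("emission", [Formula("E_GW", "...")])
stack = AlgorithmStack("nitrogen emissions via groundwater",
                       [runoff, AlgorithmStack("inner", [emission])])
print([f.target for f in flatten(stack)])  # ['Q_GW', 'Q_SUR', 'E_GW']
```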

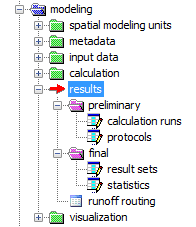

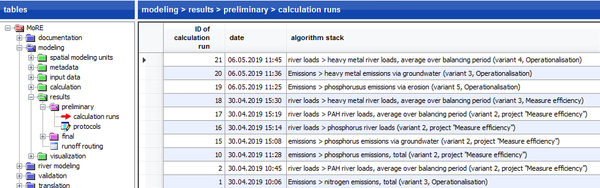

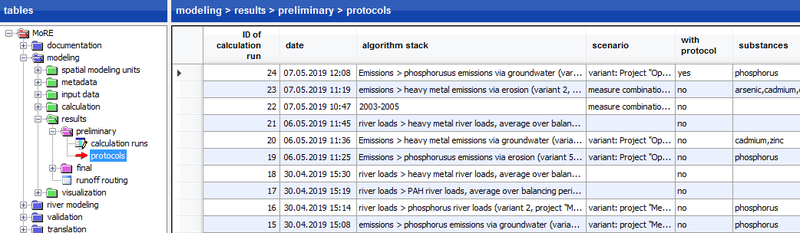

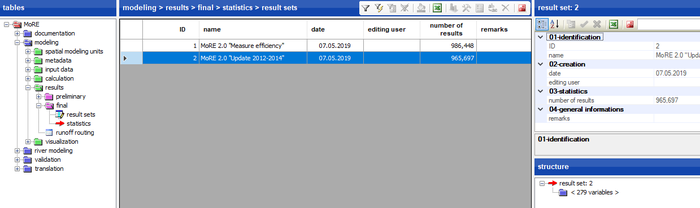

Results

In the subfolder preliminary, the object table results contains all generated results, both as preliminary calculation runs and as a detailed protocol. The folder final stores all verified results as result sets and also contains statistical data. For the management and export of data, see the sections on exporting results and saving results in result sets. The runoff routing model with its bifurcations is also shown in this folder.

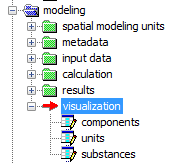

Visualization

This object table contains tables for components, units and substances. By ticking components and substances, the user can adjust the visualization.

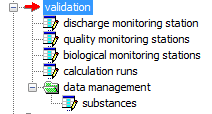

Object table validation

The object table for validation contains daily discharge values of the stream gauging stations, water quality values of the water monitoring stations and the resulting river loads.

Object table translation and administration

The translation and administration modules are only visible to administrators in the PostgreSQL database. The river basin management system MoRE was optimized for use in German and English. A translation run automatically detects newly implemented expressions that do not yet have a translation in the MoRE dictionary. These expressions have to be translated using the translation module so that they are available in both the German and the English version. In the administration module, several basic settings can be made, e.g. concerning the database source, the structure, attributes and display of the object tables, user rights, predefined filters and the triggering of error messages.

Data grid

Clicking on an object table displays its content as a table in the data grid. The content and heading of the data grid correspond to the selected object table. Clicking on the left margin of the data grid selects a data record of the listed object table; the selected record is highlighted in blue. The toolbar provides filters for selecting data records.

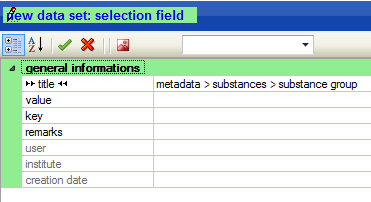

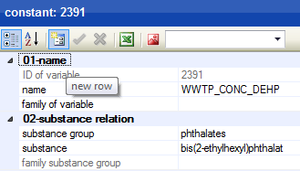

Attribute window

The top right window displays further details of the selected entry of an object table. The heading and content of the window correspond to the data record selected in the data grid. New data records can be created and edited in the attribute window.

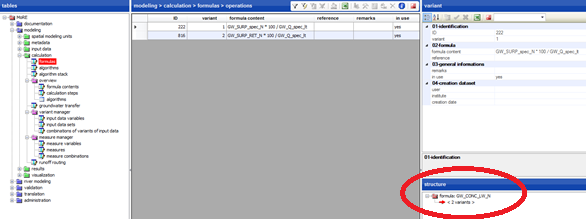

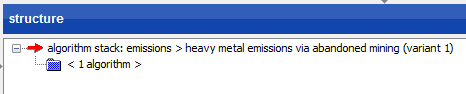

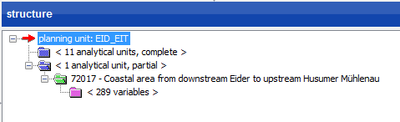

Structure window

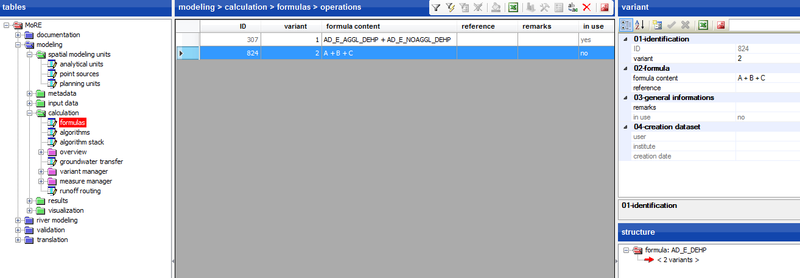

The bottom right window displays the structure of the data record selected in the data grid. This is especially helpful for the submenu entries of the object table calculation. If the table formulas is selected, the variants of the formulas are displayed in the structure window. If the table algorithms is selected, the structure window shows the individual calculation steps, and if the table algorithm stacks is selected, the individual algorithms are listed. Clicking on the entries shown in the structure window (< n variants >, < n calculation steps >, < n algorithms >) displays further details in the data grid.

The structure window is also relevant for the object tables modeling > visualization as well as modeling > spatial modeling units.

MoRE Developer toolbars

In MoRE, two toolbars are implemented which allow interaction with the database as well as creating and editing data records. In addition, the view of the data grid and the attribute window can be adapted. Lists can be exported to Excel. Finally, the calculation can be launched via the toolbar.

Toolbar in the data grid

MoRE has a toolbar in the data grid which offers different tools depending on the object table selected.

These include the following functions:

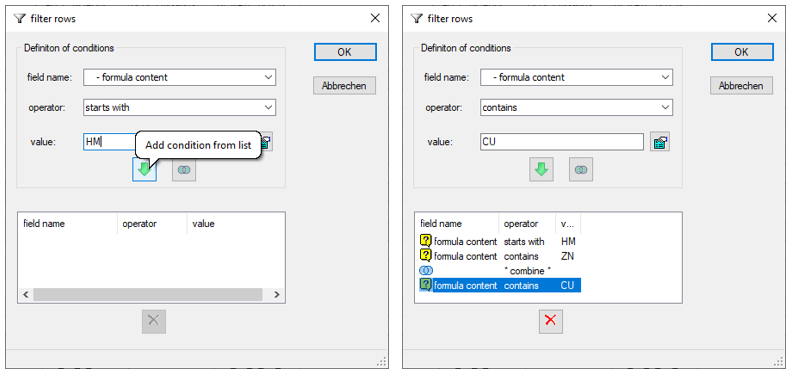

filter rows: data records can be filtered by certain criteria

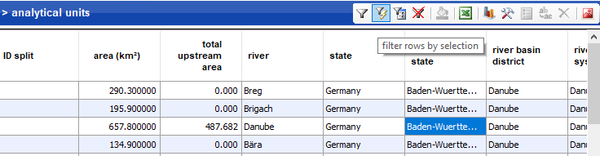

filter rows: data records can be filtered by certain criteria filter rows by selection: filter data sets by certain criteria within a column

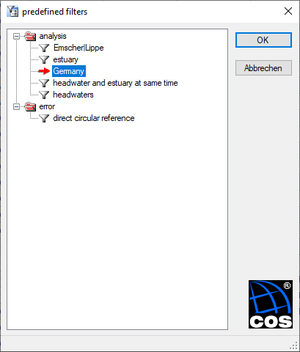

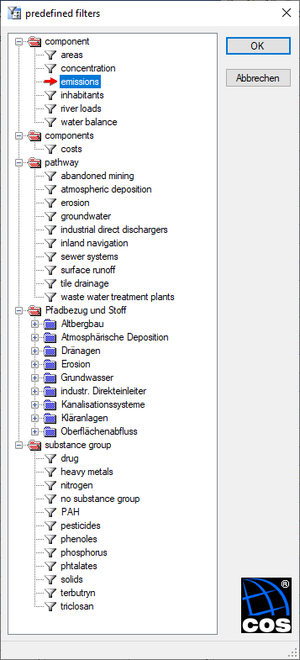

filter rows by selection: filter data sets by certain criteria within a column predefined filter: filter by common selection criteria

predefined filter: filter by common selection criteria clear filter: previously set filters are removed and all records are shown

clear filter: previously set filters are removed and all records are shown writing table to Excel: exports the content of the data grid to MS Excel

writing table to Excel: exports the content of the data grid to MS Excel writing statistics to Excel: exports statistical data on analytical units, planning units and point sources as a table and as diagram to Excel

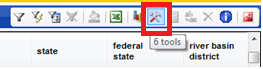

writing statistics to Excel: exports statistical data on analytical units, planning units and point sources as a table and as diagram to Excel tools: execute certain special functions of the calculation engine

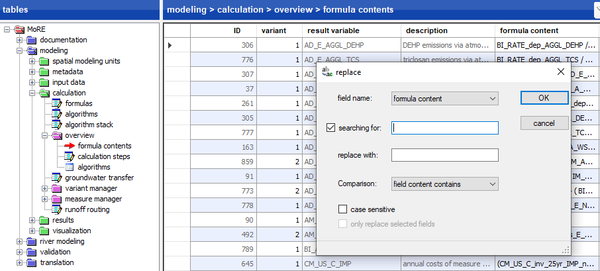

tools: execute certain special functions of the calculation engine replace: edit or replace several entries at the same time

replace: edit or replace several entries at the same time delete selected rows: delete a data set

delete selected rows: delete a data set

Whether a tool is available depends on the selected object table; unavailable tools are shown grayed out. The special function TOOLS provides different functionalities in the individual object tables.

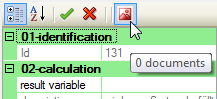

Toolbar in the attribute window

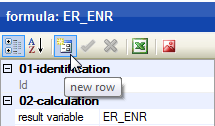

In the attribute window, another toolbar is implemented. This toolbar includes the following functions:

- categorized: arrange the entries in the attribute window by category

- new row: create, accept or discard new data records

- writing properties to Excel: export the content of the attribute window

- documents: upload PDF documents related to the data records

Toolbar in the title bar

The toolbar in the title bar contains the following tools:

- open parameter database: developer’s tool with access to the database (not available to users due to password protection)

- check parameters: run SQL routines that fill tables and check and update data types if necessary

- rebuild tree structure of tables: update the tree structure view

- logged in as administrator: log in as administrator if the admin entity of the model is used

- about MoRE: information about the version and development of MoRE

- MoRE manual: open the current manual as a PDF file

Some of these tools are only available when the user is logged in as administrator. Unavailable tools are shown grayed out.

Implementation of input data and modeling approaches

Using MoRE Developer, emissions and river loads can be calculated both for the already implemented analytical units with the standard input data and modeling approaches and for other analytical units and point sources. If other (often more detailed) input data are available, they can be imported into MoRE and used as a variant of the input data for modeling. Depending on the data basis, modeling approaches may be adjusted and stored as a variant alongside the basic variant. This allows emissions via a pathway to be calculated in different variations with distinct input data sets or modeling approaches. The results can then be compared to evaluate the quality of the input data and modeling approaches.

Implementing a spatial basis for modeling

Implementing new analytical units

If a spatial basis for modeling other than the one already embedded is desired, new analytical units have to be implemented in MoRE. For example, smaller units or river basin districts outside Central Europe can be embedded. First, the new hydrological catchments have to be derived using a GIS routine (e.g. ArcHydro or other watershed delineation tools). The import of new analytical units works similarly to the import of input data.

Preparation of the import files in Excel

For the import of input data to MoRE a template file is available (“MoRE_import.xls”), which is delivered with the system. The file contains a spreadsheet for the import of the catchments (“analytical units”).

Import into the system

If the object table modeling > spatial modeling units > analytical units is selected, new analytical units can be integrated into MoRE via the special function in the toolbar of the data grid (TOOLS → INPUT DATA → IMPORT) by choosing the prepared Excel file. The box “overwriting existing values” is checked by default. Clicking on “start data import” imports the data. Please note: Analytical units can be deleted from the object table spatial modeling units > analytical units using the tool DELETE SELECTED ROWS. If necessary, the stored input data have to be deleted additionally from the object table input data > analytical unit variables or input data > periodical analytical unit variables (TOOLS → MORE → DELETE INPUT DATA).

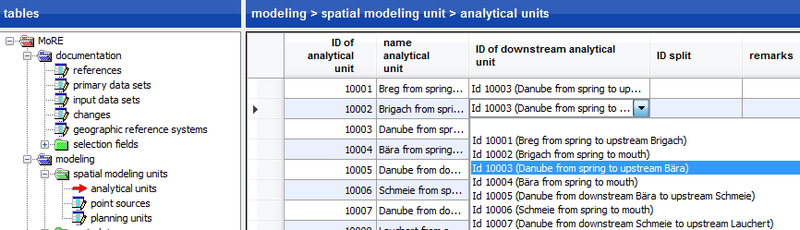

Creating a new runoff routing model

All calculations in MoRE are performed at the level of analytical units. To aggregate river loads within the river network, a runoff routing model is needed. It represents the flow direction of every individual analytical unit (for further details see Fuchs et al. (2010) [1], p. 8). To derive a runoff routing model, every analytical unit has to be assigned a downstream analytical unit. This is usually done in a preprocessing step, in which case the runoff routing model may already exist as a spreadsheet file that can be imported into MoRE. Occasionally, one analytical unit drains into two different downstream analytical units (e.g. where there are channels). For this case, the field “Id Split” was created. It holds the ID of the second downstream analytical unit in case of a branching (“splitting”); if there is no branching, the field remains empty. The variable RM_FCT_Q_SPLIT distributes the load between the two downstream analytical units: when the load is calculated along the runoff routing, the load passed on via the first-order branch (the regular downstream analytical unit) is multiplied by (1 - RM_FCT_Q_SPLIT), and the load passed on via the second-order branch (“Id Split”) is multiplied by RM_FCT_Q_SPLIT.

A small number of changes to already existing analytical units can be made manually in the object table spatial modeling units > analytical units. Manual entries require the writing mode to be active. The respective analytical units are then selected in the columns “ID of downstream analytical unit” and “ID split”.
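The routing rule above can be sketched as a topological accumulation of loads over the network of analytical units: each unit passes its own emission plus everything it received downstream, split by (1 - RM_FCT_Q_SPLIT) and RM_FCT_Q_SPLIT where an “Id Split” exists. This is a simplified illustration, not MoRE's calculation engine: in particular it ignores in-stream retention, and the class and field names are hypothetical. Kahn's algorithm is used so that a unit is only processed after all of its upstream units.

```python
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Optional


@dataclass
class AnalyticalUnit:
    au_id: int
    emission: float                 # load generated within this analytical unit
    downstream_id: Optional[int]    # "ID of downstream analytical unit" (None at the outlet)
    split_id: Optional[int] = None  # "Id Split": second downstream unit if the flow branches
    split_factor: float = 0.0       # RM_FCT_Q_SPLIT: share passed on to split_id


def route_loads(units):
    """Accumulate loads from upstream to downstream in topological order.

    Returns {au_id: total load leaving the unit}. In-stream retention is ignored.
    """
    by_id = {u.au_id: u for u in units}
    indegree = dict.fromkeys(by_id, 0)
    for u in units:
        for target in (u.downstream_id, u.split_id):
            if target is None:
                continue
            if target not in by_id:
                raise ValueError(f"unit {u.au_id} drains into unknown unit {target}")
            indegree[target] += 1

    inflow = defaultdict(float)
    queue = deque(au for au, d in indegree.items() if d == 0)  # headwater units
    total = {}
    while queue:
        u = by_id[queue.popleft()]
        total[u.au_id] = u.emission + inflow[u.au_id]
        if u.split_id is None:
            shares = [(u.downstream_id, 1.0)]
        else:  # first-order branch gets (1 - f), second-order branch gets f
            shares = [(u.downstream_id, 1.0 - u.split_factor), (u.split_id, u.split_factor)]
        for target, share in shares:
            if target is None:
                continue
            inflow[target] += total[u.au_id] * share
            indegree[target] -= 1
            if indegree[target] == 0:
                queue.append(target)

    if len(total) != len(by_id):
        raise ValueError("runoff routing contains a cycle")
    return total


units = [
    AnalyticalUnit(1, 10.0, 3),
    AnalyticalUnit(2, 20.0, 3, split_id=4, split_factor=0.25),
    AnalyticalUnit(3, 5.0, None),
    AnalyticalUnit(4, 1.0, None),
]
print(route_loads(units))
```

In this example, unit 2 passes 75 % of its load (15) to unit 3 and 25 % (5) to unit 4, so unit 3 carries 5 + 10 + 15 = 30 and unit 4 carries 1 + 5 = 6.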

If a different topology is to be used for modeling, it has to be imported into MoRE first. To do so, save the topology in the import template file (spreadsheet “analytical units”) and then import it into MoRE.

To create the new runoff routing model, select at least one analytical unit and run the special function TOOLS → RUNOFF ROUTING → CALCULATE. The result is shown in the object table results > runoff routing.

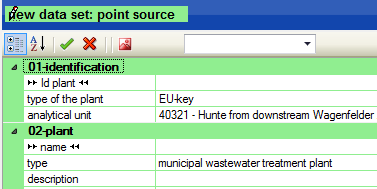

Implementing new point sources

Point sources are entered in the object table modeling > spatial modeling units > point sources. There are two options for implementing point sources. The first option is manual entry: activate the writing mode and create a new data set in the attribute window. The “ID” and the “type” of the point source are mandatory fields. In addition, the analytical unit in which the point source is located must be selected.

To start a calculation run for a point source, a validity period must be defined. Note the syntax of the validity: the start and end year of a period are separated by a hyphen, and individual years or periods are separated by commas.

This manual procedure is suitable when individual plants are to be added to the existing set of point sources.

| Id plant | ... | validity |
|---|---|---|
| 1 | ... | 2006-2011 |
| 2 | ... | 2006-2009, 2011 |
| 3 | ... | 2006, 2008-2009, 2011 |
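The validity syntax shown in the table can be checked before entering or importing point sources. The following sketch expands a validity string into the set of years it covers. It is a hypothetical helper written from the syntax rules stated above, not part of MoRE, and MoRE's own parser may be stricter or more lenient (e.g. about spaces).

```python
def parse_validity(validity: str) -> set:
    """Expand a validity string such as "2006, 2008-2009, 2011" into a set of years.

    Periods are written as start-end (hyphen); individual years or periods are
    separated by commas.
    """
    years = set()
    for part in validity.split(","):
        part = part.strip()
        if not part:
            raise ValueError(f"empty entry in validity {validity!r}")
        if "-" in part:
            bounds = part.split("-")
            if len(bounds) != 2:
                raise ValueError(f"malformed period {part!r}")
            start, end = int(bounds[0]), int(bounds[1])  # int() tolerates surrounding spaces
            if start > end:
                raise ValueError(f"period {part!r} ends before it starts")
            years.update(range(start, end + 1))
        else:
            years.add(int(part))
    return years


def is_valid_in(validity: str, year: int) -> bool:
    """True if a point source with this validity is active in the given year."""
    return year in parse_validity(validity)


print(sorted(parse_validity("2006, 2008-2009, 2011")))  # [2006, 2008, 2009, 2011]
```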

If there are no data in the system yet and a long list of point sources has to be imported, the point sources should be implemented using the special function TOOLS → IMPORT INPUT DATA in the object table spatial modeling units > point sources. For this purpose, fill in the spreadsheet “point sources” of the import template file and import it. The import of point sources works similarly to the import of analytical units. The columns “id point sources”, “type of point source”, “validity” and “ID of analytical unit” are mandatory.

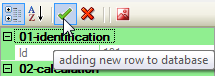

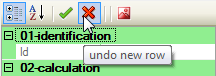

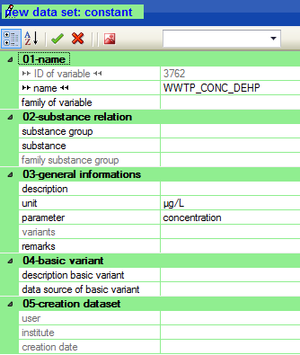

Create new data sets

The basic procedure for creating new data sets is as follows:

- Activate the writing mode

- Select the corresponding object table in the left window. Its content is then displayed in the data grid.

- Create a new data set using the toolbar of the attribute window.

- Fill in all needed information.

- If applicable, attach documents with additional information, e.g. flow charts showing the calculation steps, Excel files, R scripts or papers.

- Transfer the data record to the database or discard the record.

Implement new input data in MoRE

Before new input data can be implemented in MoRE, the corresponding variables have to be created in the system. The input data can then be imported into MoRE. New substances, substance groups or units have to be added to MoRE before the variables are created so that they can be assigned correctly. Further metadata of the variables (e.g. components, emission pathways – here as specification or parameter) can be added in the object table documentation in the same way as substance groups and units.

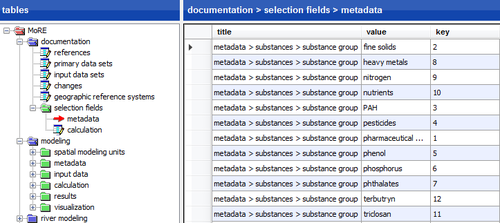

Creating new substance groups and substances

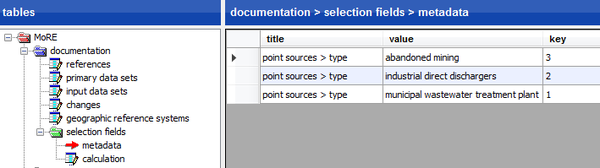

New substance groups can be added as a new data set in the object table documentation > selection fields > metadata. In the data grid all previously entered substance groups are shown in the column “title” under the term “metadata > substances > substance group”.

A new substance group is created by adding a new data set. Note that the name of the new substance group is entered under “value” and that a unique “key” has to be provided, e.g. as consecutive numbering.

Subsequently, the new substances belonging to the substance group can be added in the object table modeling > metadata > substances. Note that “substance name short” may only contain upper- and lowercase letters. The ID of the substance is assigned automatically as a consecutive number.

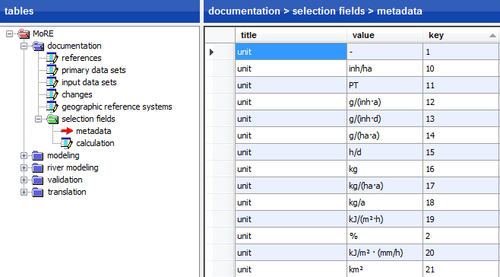

Creating a new unit

Within the object table documentation > selection fields > metadata, a unit can be created following the routine described above for substance groups. Enter the word “unit” as the “title” and the actual unit as the “value”. Make sure to provide a unique “key”, e.g. as consecutive numbering.

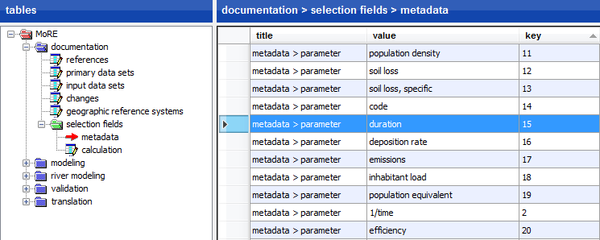

Creating a new parameter

Using the object table documentation > selection fields > metadata, a parameter can be created following the routine described above for substance groups. Enter the term “metadata > parameter” as the “title” and the actual parameter as the “value”. Make sure to provide a unique “key”, e.g. as consecutive numbering.
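Substance groups, units, parameters and point source types are all entered as selection field entries with a “title”, a unique “key” and a “value”. The sketch below models such a list in memory and assigns consecutive keys starting at 1 when none is given. It is a hypothetical illustration: the assumption that keys only need to be unique within one title (rather than across the whole table) is not confirmed by the manual.

```python
from collections import defaultdict


class SelectionFields:
    """Hypothetical in-memory model of documentation > selection fields > metadata.

    Each entry has a title (e.g. "unit"), a key and a value. Keys are assumed to be
    unique per title and are numbered consecutively from 1 if not given.
    """

    def __init__(self):
        self._entries = defaultdict(dict)  # title -> {key: value}

    def add(self, title, value, key=None):
        if not value:
            raise ValueError("value must not be empty")
        entries = self._entries[title]
        if key is None:
            key = max(entries, default=0) + 1  # consecutive numbering
        if key in entries:
            raise ValueError(f"key {key} already used for title {title!r}")
        entries[key] = value
        return key

    def values(self, title):
        """Values of one title, ordered by key."""
        return [v for _, v in sorted(self._entries[title].items())]


fields = SelectionFields()
fields.add("unit", "kg/a")
fields.add("unit", "t/a")
fields.add("point sources > type", "municipal waste water treatment plant")
print(fields.values("unit"))  # ['kg/a', 't/a']
```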

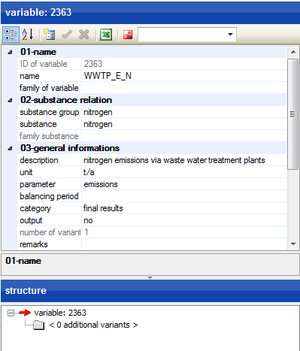

Creating new variables

When a new variable is created, its name should comply with the nomenclature of the variables, and further information (such as type of variable, unit, parameter, source, and corresponding substance and emission pathway) has to be added as metadata. Depending on the type of metadata, either free text or a corresponding entry of a selection field (defined in the object table documentation > selection fields > metadata) can be chosen.

Creating a variable in the metadata

A new variable has to be created in the object table modeling > metadata, depending on the type of variable in one of the submenus constants, analytical units variables, periodical analytical units variables, point source variables or periodical point source variables. The new data set is created as described above. An example of creating a new constant is shown here:

When creating a new data set, enter the name of the new variable (according to the nomenclature) and, if applicable, the variable family under 01-name. Then fill in the fields 02-substance relation, 03-general information and 04-basic variant and add the data set to the database. It is important to select a category: input data, intermediate results or final results.

The description under 03-general information should also be valid for any further variants of the variable that may be created in the future. If further variants of a variable are created, it is recommended to describe the current basic variant under 04-basic variant.

After creating a new variable, it should be checked if it can be found in the respective object table. The constant from the example can be found under the path modeling > metadata > constants.

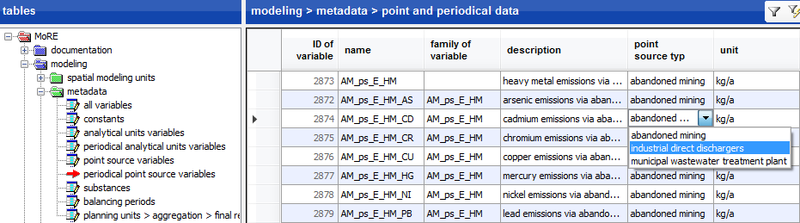

Creating point source variables

In MoRE, the first step is to define the point source types. This is done in the object table documentation > selection fields > metadata. Enter the term “point sources > type” as the “title” and the actual name of the point source type as the “value”. In addition, a unique “key” has to be given for each point source type, starting with 1. The fields “value” and “key” must not be empty. The defined point source types must match the entries in the object tables modeling > input data > point source variables and periodical point source variables, respectively.

As the next step, the new variable can be created and defined in the object table modeling > input data > point source variables or periodical point source variables, respectively. Here it is important to select the appropriate point source type defined before (see above). Family variables, descriptions, units, substances and substance groups can also be assigned.

Create family variables

Creating a family variable is recommended if the same modeling approach is used for several substances. The formulas then only have to be entered once for the family and are used to calculate the emissions of all family members. So far, this has been realized in MoRE for the groups of heavy metals and polycyclic aromatic hydrocarbons (PAH). The family variable always has the less specific and thus higher-ranked name; the names of the heavy metal families end with _HM. For a family named e.g. AD_RATE_HM (deposition rate of heavy metals), the individual members (here the specific heavy metals) could be named AD_RATE_HM_CD, AD_RATE_HM_CR etc. Family variables are created like other variables. When individual members are created, their family variable has to be specified additionally in the metadata (at 01-name).
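The benefit of the naming convention is that one family formula can be instantiated for every member by appending the member suffix to each family name. The sketch below does this by text substitution. It is only an illustration of the convention: how MoRE actually resolves family members internally is not described here, and the formula `E_AD_HM = AD_RATE_HM * A_AU` as well as the function name are hypothetical.

```python
import re


def expand_family(formula: str, family_tag: str, member_suffixes: list) -> dict:
    """Instantiate a family formula once per member.

    Every whole name ending in _<family_tag> (e.g. AD_RATE_HM) gets the member
    suffix appended (e.g. AD_RATE_HM_CD); other names are left unchanged.
    """
    pattern = re.compile(rf"\b(\w*_{re.escape(family_tag)})\b")
    return {
        suffix: pattern.sub(lambda m: f"{m.group(1)}_{suffix}", formula)
        for suffix in member_suffixes
    }


for member, text in expand_family("E_AD_HM = AD_RATE_HM * A_AU", "HM", ["CD", "CR"]).items():
    print(member, text)
# CD E_AD_HM_CD = AD_RATE_HM_CD * A_AU
# CR E_AD_HM_CR = AD_RATE_HM_CR * A_AU
```

The word boundary after the family tag prevents names that already carry a member suffix (such as AD_RATE_HM_CD) from being expanded a second time.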

Import of new data to MoRE

Please note: The import of values for a variable is only possible after creating and defining a variable in the metadata.

Preparation of the import files in Excel

For the import of new data into MoRE, a template file is available (“MoRE_import.xls”). This file contains spreadsheets for the import of analytical units, rivers, gauging stations and quality monitoring stations as well as the different types of variables (constants, analytical units variables, point source variables). The file is delivered with the system.

MoRE can import several variables in one operation, but they have to be of the same type. For this reason, only one spreadsheet of the import file may be filled in. Unused spreadsheets must not contain any entries except for the headings.

A constant is characterized by having the same value for many or all analytical units. Thus, it is usually sufficient to enter the name of the constant, its value and its reference in the import file.

Analytical units variables may have a different value for each analytical unit but no temporal reference. An example is the size of the analytical unit. It is therefore sufficient to enter one row per analytical unit (identified by its ID) with the variable's name, its value and its reference. For all 3456 analytical units this yields 3456 rows, or correspondingly fewer if input data are imported for fewer analytical units (e.g. only for Germany). The same applies to point source variables, where the number of rows in the import file matches the number of implemented point sources.

Periodical analytical units variables have a different value for each analytical unit and each year. Hence, for every analytical unit and year, the year, the name of the variable, its value and its reference are needed. Similarly, periodical point source variables have a different value for each point source (identified by its ID) and each year, so the import file needs a separate row with variable name, value and reference for each point source and year.

Please note: MoRE allows variants of variables to be created in order to model different scenarios. Therefore, the variant number has to be specified in the import file for all input data.
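The row counts described above follow directly from the variable type. This Python sketch builds the rows of an import sheet for a periodical variable, one per unit and year, and carries the variant number required for all input data. The field names, the default variant 1 and the variable name are assumptions for illustration, not the actual column layout of “MoRE_import.xls”.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ImportRow:
    """One row of a periodical variable in a hypothetical import sheet layout."""
    unit_id: int      # analytical unit or point source ID
    year: int
    variable: str
    value: float
    reference: str
    variant: int = 1  # variant number (assumed default: basic variant 1)


def periodical_rows(values: dict[tuple[int, int], float], variable: str,
                    reference: str, variant: int = 1) -> list[ImportRow]:
    """One row per (unit, year) pair, sorted by unit ID and year."""
    return [ImportRow(unit, year, variable, value, reference, variant)
            for (unit, year), value in sorted(values.items())]


def expected_rows(n_units: int, n_years: int = 1) -> int:
    """Non-periodical variables: one row per unit (n_years=1); periodical: one per unit and year."""
    return n_units * n_years


values = {(unit, year): 1.0 for unit in (10040, 10041, 10042) for year in (2015, 2016)}
rows = periodical_rows(values, "HYPOTHETICAL_VAR", "own data")
print(len(rows), expected_rows(3, 2), expected_rows(3456))  # 6 6 3456
```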

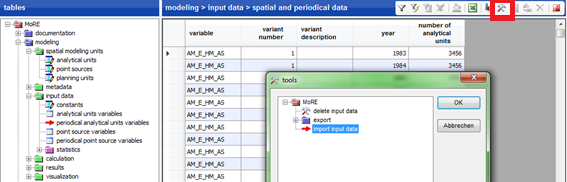

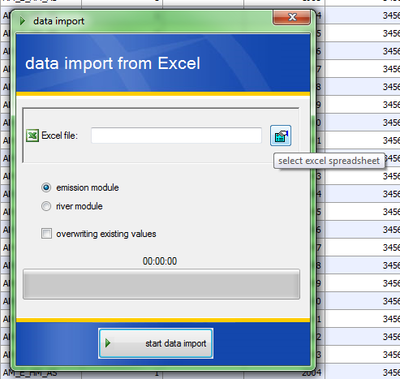

Import into the system

Analytical units and point sources are imported in the object table modeling > spatial modeling units > name of variable type. In contrast, analytical units variables and point source variables are imported via the object table modeling > input data > name of variable type. Using the toolbar of the data grid, the special function TOOLS → MORE → IMPORT INPUT DATA can be activated to import new data into MoRE.

Choose the prepared Excel file:

The box “overwriting existing values” is checked by default. Clicking on “start data import” imports the data.

Data that has already been imported can be deleted from the database again via the special function TOOLS → MORE → DELETE INPUT DATA.

Implementation of new modeling approaches

The modeling approaches in MoRE are stored in a database and are interpreted, together with the required input data, by the calculation engine during a modeling run. The modeled components are inhabitants, areas, runoff, emissions, river loads and costs. These are usually calculated using empirical equations, which can be entered in MoRE as plain text. Modeling is organized on three levels. The smallest unit is the formula (equation). A sequence of formulas in a defined order is called an algorithm. Multiple algorithms in a defined order form an algorithm stack. An algorithm stack usually represents a component and its runoff components or pathways, respectively. All modeling approaches are listed in the object table modeling > calculation.
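The three levels can be pictured as nested, ordered lists. The Python sketch below is a simplified model under stated assumptions: formula contents are represented by Python functions instead of MoRE's plain-text syntax, and the variables A_ARABLE, A_URBAN, A_TOT and SHR_URBAN are hypothetical. It shows why the order matters: a formula can only use results computed by earlier steps.

```python
from dataclasses import dataclass, field
from typing import Callable

Values = dict[str, float]


@dataclass
class Formula:
    result: str                          # result variable (intermediate or final result)
    compute: Callable[[Values], float]   # stands in for the plain-text formula content


@dataclass
class Algorithm:
    name: str
    steps: list[Formula] = field(default_factory=list)    # formulas in a defined order


@dataclass
class AlgorithmStack:
    name: str
    steps: list[Algorithm] = field(default_factory=list)  # algorithms in a defined order


def run_stack(stack: AlgorithmStack, inputs: Values) -> Values:
    """Evaluate every formula in order; later formulas can use earlier results."""
    values = dict(inputs)
    for algorithm in stack.steps:
        for formula in algorithm.steps:
            values[formula.result] = formula.compute(values)
    return values


# Hypothetical variables, for illustration only
a_tot = Formula("A_TOT", lambda v: v["A_ARABLE"] + v["A_URBAN"])
shr_urban = Formula("SHR_URBAN", lambda v: v["A_URBAN"] * 100 / v["A_TOT"])
stack = AlgorithmStack("areas (toy)", [Algorithm("area shares", [a_tot, shr_urban])])
print(run_stack(stack, {"A_ARABLE": 40.0, "A_URBAN": 10.0})["SHR_URBAN"])  # 20.0
```

Swapping the two formulas would fail with a KeyError, because SHR_URBAN needs A_TOT to exist first.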

When a new approach is created, the individual calculation steps should first be laid out in a flow chart. The flow chart serves as guidance and as a template for the implementation in MoRE and can be stored as a document together with the algorithm stack. Flow charts were created for the implemented algorithm stacks to ensure high transparency and to give a good overview of the approaches used for modeling the individual components in MoRE.

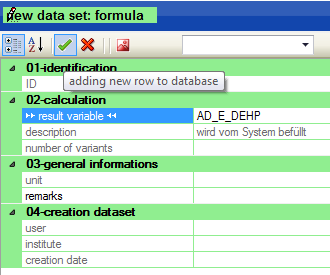

Create formulas

First, the result variable of a formula has to be created. When creating the new variable, it is important to assign it to the category intermediate result or final result; no formulas can be defined for variables assigned to the category input data. Subsequently, a new data set can be created in the object table modeling > calculation > formulas, in which the previously defined result variable is selected. The new data record then has to be added to the database.

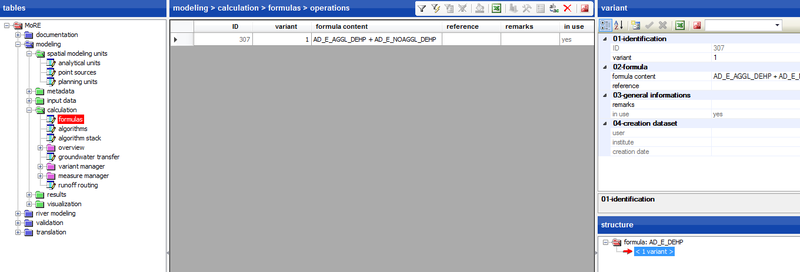

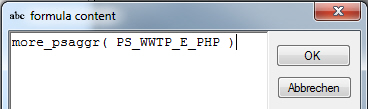

Next, the formula content for calculating the variable is entered. For this, click on <1 variant> (in this example) in the structure window.

The structure window shows how many formulas (data sets) exist for each variable. The term <0 variants> means that there is no formula yet; the term <2 variants> means that two formulas already exist for the selected variable.

By clicking on the number of variants, a table with the formula contents is displayed in the data grid.

To build a new formula, a new data set has to be created. In the attribute window, the formula is typed as plain text in the text editor under “formula content”.

The formula then has to be added to the database, after which it appears in the data grid.

No knowledge of a programming language is needed to use the interface. For good readability, it is recommended to separate the individual operands and operators of the formula with spaces. Please observe the available operators when creating formula contents.

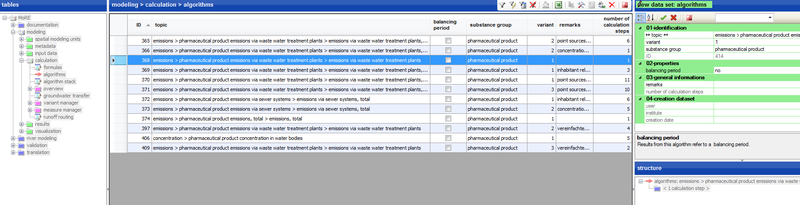

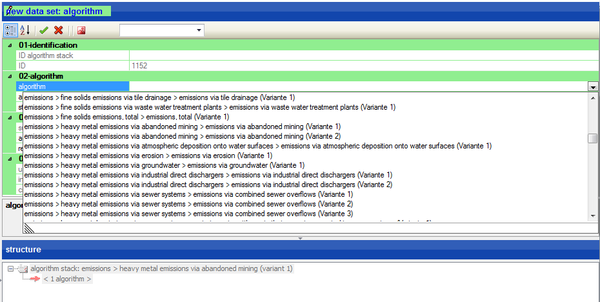

Creating algorithms

After a formula has been entered, a corresponding algorithm can be defined. For this, the object table modeling > calculation > algorithms is selected and a new data set is added to the database. If applicable, a reference to a substance has to be chosen, and it has to be defined whether the calculation should be performed for balancing periods. As an example, the algorithm “emissions > pharmaceutical product emissions via waste water treatment plants > emissions via waste water treatment plants” is created. Subsequently, calculation steps have to be assigned to the algorithm in a defined order.

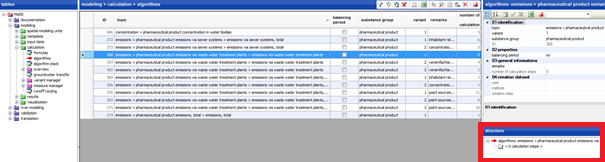

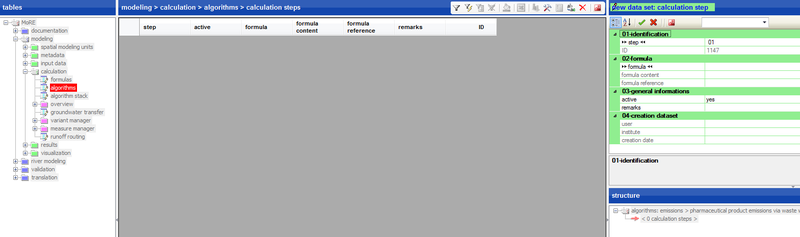

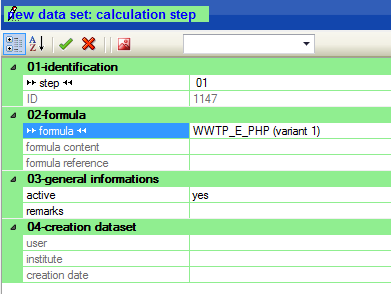

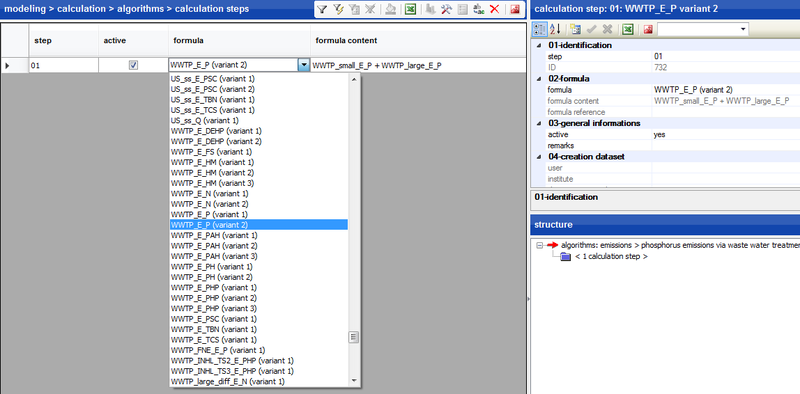

Creating calculation steps

In the data grid of the object table modeling > calculation > algorithms all previously implemented algorithms are listed. The desired algorithm is selected. The structure window shows additional information about the selected algorithm. To assign calculation steps to the algorithm click on <0 calculation steps> in the structure window.

The appropriate table opens in the data grid and in the attribute window a new data set can be created.

Then, the desired formula can be selected. In the example, the formula “WWTP_E_PHP (variant 1)” is selected, and the corresponding formula content is assigned automatically.

The order of the calculation steps can be changed later with the special function TOOLS → MORE → RENUMBER ALGORITHM.
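How renumbering works internally is not described here. One plausible reading, sketched in Python as an assumption, is that the steps are sorted by their current number and then numbered 1 to n again, which closes any gaps left after a step has been moved. The step names and the intermediate number 1.5 are hypothetical.

```python
def renumber(steps: list[tuple[float, str]]) -> list[tuple[int, str]]:
    """Sort calculation steps by their current number and renumber them 1..n without gaps."""
    ordered = sorted(steps, key=lambda step: step[0])
    return [(new_no, name) for new_no, (_, name) in enumerate(ordered, start=1)]


# "formula C" was moved between steps 1 and 2 by giving it the hypothetical number 1.5
steps = [(1, "formula A"), (2, "formula B"), (1.5, "formula C")]
print(renumber(steps))  # [(1, 'formula A'), (2, 'formula C'), (3, 'formula B')]
```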

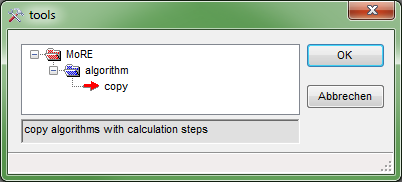

Copying algorithms

Copying algorithms can save time when working with MoRE. Algorithms that are similar to each other or that are meant to exist in different variants (e.g. for different substances) can be duplicated this way without much effort. The copy tool creates an exact copy of the algorithm. To avoid confusion, the name of the copy starts with "_copy n" followed by the original name of the algorithm; this name can be changed freely. To copy an algorithm, select it and use the special function TOOLS → ALGORITHM → COPY from the toolbar.

Since the listed algorithms are displayed in alphabetical order by default, the created copy of the algorithm is not displayed next to the original but at the top of the table (starts with "_copy n").
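The naming and sorting behavior can be illustrated in Python. The rule that n is the first unused copy number is an assumption. The sketch also assumes a case-insensitive alphabetical sort, under which the underscore (U+005F) sorts before the letters a to z. MoRE's actual sort rule may differ.

```python
def copy_name(original: str, existing: set[str]) -> str:
    """Build a '_copy n <original>' name, using the first unused n (assumption)."""
    n = 1
    while f"_copy {n} {original}" in existing:
        n += 1
    return f"_copy {n} {original}"


names = {"emissions via waste water treatment plants", "Areas, total"}
first = copy_name("emissions via waste water treatment plants", names)
names.add(first)
second = copy_name("emissions via waste water treatment plants", names)
names.add(second)

# Case-insensitive alphabetical order: both copies are listed before "Areas, total"
print(sorted(names, key=str.casefold))
```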

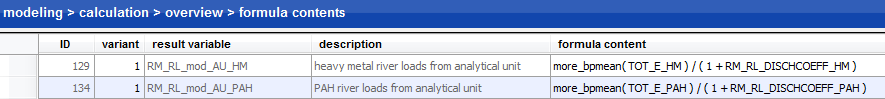

Creating algorithms for balancing periods

Balancing periods are designed primarily for calculating river loads. The function “more_bpmean” calculates the mean of a variable over the desired balancing period and can be used as a calculation operation in any formula.
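To illustrate what “more_bpmean” computes, the following Python function takes the arithmetic mean of a variable's yearly values over a balancing period. It is a hypothetical stand-in, not MoRE's implementation. The call syntax of “more_bpmean” inside a formula is not shown here, and raising an error for missing years is an assumption.

```python
from statistics import fmean


def bp_mean(yearly: dict[int, float], start: int, end: int) -> float:
    """Mean of a variable's yearly values over the balancing period start..end (inclusive)."""
    years = range(start, end + 1)
    missing = [year for year in years if year not in yearly]
    if missing:
        raise KeyError(f"no values for years {missing}")
    return fmean([yearly[year] for year in years])


runoff = {2014: 10.0, 2015: 20.0, 2016: 30.0, 2017: 40.0}  # hypothetical yearly values
print(bp_mean(runoff, 2015, 2017))  # 30.0
```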

Before modeling with balancing periods, these have to be defined under modeling > metadata > balancing periods.

For all algorithms and algorithm stacks that relate to balancing periods and use the function “more_bpmean”, the column “balancing period” has to be activated in the object table modeling > calculation > algorithm or modeling > calculation > algorithm stack, respectively.

The results are created analogously to the general approach using a SPECIAL FUNCTION.

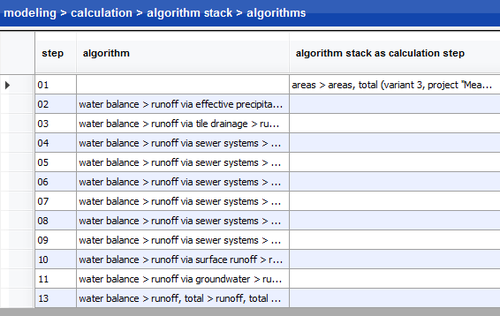

Create algorithm stacks

In general, an algorithm stack represents an accounting approach for an emission pathway of water or substance flows. It can be composed of one or more algorithms (and algorithm stacks). The order in which the algorithms are executed is essential for a correct calculation. A new algorithm stack is created following the procedure described above. If necessary, a reference to a substance and balancing periods have to be defined. Subsequently, the individual steps, in the form of algorithms, have to be assigned to the algorithm stack by clicking on <0 calculation steps> in the structure window.

After that, a new data set can be created in the attribute window. In the field “02-algorithm”, the corresponding algorithm is selected from a dropdown menu. The number of the calculation step is assigned automatically in the field “step”. It is also possible to change the sequence of the steps and to renumber them. Finally, the new data record is added to the database by accepting the settings.

Please note: An algorithm stack may contain other, previously created algorithm stacks. These can be selected under “02-algorithm” in the field “algorithm stack as calculation step” from the list of available algorithm stacks. For example, the algorithm stack areas > areas, total is the first calculation step of the algorithm stack water balance > runoff, total, and it also exists as an independent algorithm stack for calculating the areas on their own.
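Nesting algorithm stacks means that an outer stack expands into the algorithms of the inner stacks at the position where they are listed. The Python sketch below models this expansion. The class and algorithm names are hypothetical, and the cycle check is an added safeguard rather than a documented MoRE feature.

```python
from dataclasses import dataclass, field


@dataclass
class Stack:
    name: str
    # each step is an algorithm name or another, previously created algorithm stack
    steps: "list[str | Stack]" = field(default_factory=list)


def flatten(stack: Stack, _path: tuple[str, ...] = ()) -> list[str]:
    """Expand nested stacks into the ordered list of algorithms that will be executed."""
    if stack.name in _path:
        raise ValueError(f"algorithm stack {stack.name!r} contains itself")
    order: list[str] = []
    for step in stack.steps:
        if isinstance(step, Stack):
            order.extend(flatten(step, _path + (stack.name,)))
        else:
            order.append(step)
    return order


areas = Stack("areas > areas, total", ["area algorithm 1", "area algorithm 2"])
runoff = Stack("water balance > runoff, total", [areas, "runoff algorithm 1"])
print(flatten(runoff))  # ['area algorithm 1', 'area algorithm 2', 'runoff algorithm 1']
print(flatten(areas))   # the inner stack can still be run on its own
```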

Algorithm stacks deposited in MoRE

In MoRE, the following algorithm stacks (possibly in different variants) are implemented:

- Inhabitants

- Areas > areas, total

- Water balance > runoff, total

- Emissions for all emission pathways and substance groups:

  - wastewater treatment plants

  - industrial direct dischargers

  - abandoned mining (only heavy metals)

  - atmospheric deposition

  - tile drainage

  - erosion

  - groundwater

  - sewer systems

  - surface runoff

  - inland navigation (only PAH)

  - emissions, total

- River loads for nitrogen, phosphorus, heavy metals and PAH

- Yearly costs for the measures:

  - sealant removal

  - increase connection rate

  - new SOT (storm overflow tanks)

  - operation improvement WWTP in regard to N

  - operation improvement WWTP in regard to P

  - retention soil filter

Apart from these algorithm stacks, any desired further algorithm stack can be created by the procedure mentioned above.

Implementation of modeling approaches with point source references

The procedure for implementing modeling approaches with point source reference is the same as at the level of analytical units. The modeling approaches for point sources are also created in the object table modeling > calculation > formulas. They are stored in the database and interpreted, together with the required input data, by the calculation engine during the modeling. All details on creating formulas, algorithms and algorithm stacks have been described above. The desired result variable has to be created as a periodical analytical units variable only. Algorithms at the level of point sources can be integrated into algorithms at the level of analytical units if the last calculation step of the algorithm aggregates the results for the individual point sources to the level of the analytical units. For this purpose, the special function “more_psaggr()” is used. The point source variable given in the brackets is summed up to obtain cumulative values for each analytical unit.

This function can also be used for variables of the categories input data, preliminary results and final results. The variable to be aggregated has to be a point source variable or a periodical point source variable. The values for the individual point sources are only exported as results if this option has been selected beforehand (in the column “output” of the object table modeling > metadata > point source variables / periodical point source variables).
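The aggregation performed by “more_psaggr()” amounts to a sum per analytical unit. This Python sketch shows that logic for one variable and one year. The point source IDs and the mapping from point sources to analytical units are hypothetical, and the function illustrates the logic only; it is not MoRE's implementation.

```python
from collections import defaultdict


def ps_aggregate(ps_values: dict[str, float], ps_unit: dict[str, int]) -> dict[int, float]:
    """Sum the values of all point sources located in the same analytical unit."""
    totals = defaultdict(float)
    for ps_id, value in ps_values.items():
        totals[ps_unit[ps_id]] += value
    return dict(totals)


ps_values = {"WWTP_1": 2.0, "WWTP_2": 3.5, "WWTP_3": 1.0}      # hypothetical point sources
ps_unit = {"WWTP_1": 10040, "WWTP_2": 10040, "WWTP_3": 10041}  # analytical unit of each
print(ps_aggregate(ps_values, ps_unit))  # {10040: 5.5, 10041: 1.0}
```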

Generate and export results

MoRE executes calculations on the basis of previously defined algorithm stacks. The results of a calculation run are saved in the object table modeling > results > preliminary > calculation runs. As described above, the last step of an algorithm stack can be saved and exported as final result of a calculation run or as detailed protocol with all calculated variables.

Execute calculation runs in MoRE

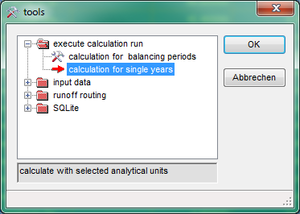

Before a calculation, the desired analytical units (e.g. 10040 to 10060) have to be selected in the object table modeling > spatial modeling units > analytical units; filters make the selection easier. The calculation is then performed for these analytical units.

Calculation for individual years

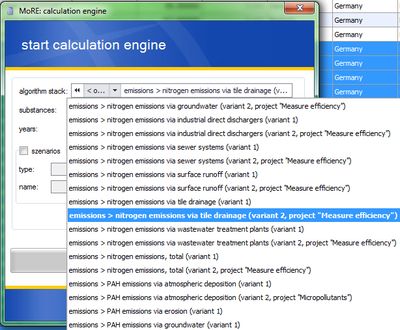

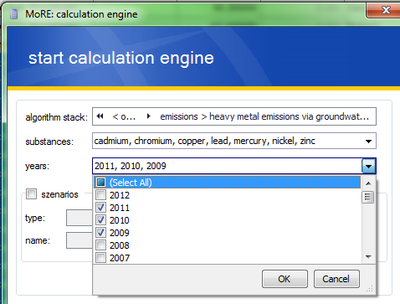

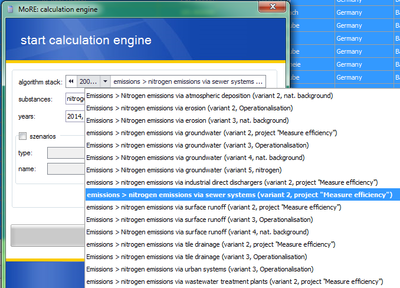

All algorithm stacks that refer to inhabitants, areas, water balance and emissions can be calculated for individual years. For this purpose, select the analytical units to be calculated and activate the special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR SINGLE YEARS from the toolbar. The window MoRE: calculation engine opens and lists all algorithm stacks that can be calculated for single years. Here, the algorithm stack, the substances and the individual years can be selected. The calculation run is started by clicking on the button START CALCULATION ENGINE:

(I) Starting special function TOOLS

(II) Selecting special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR SINGLE YEARS

(III) Selecting the algorithm stack

(IV) Selecting the years

(V) Executing the calculation run

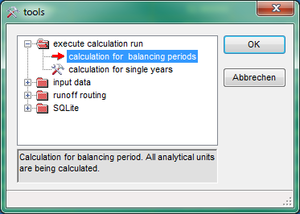

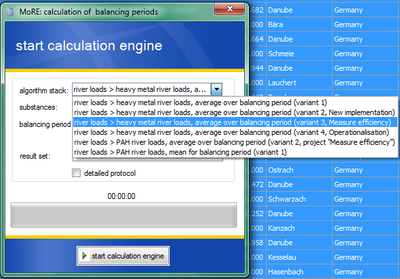

Calculation for balancing periods

The algorithm stacks for river loads are calculated on the basis of defined balancing periods. In addition to the balancing periods implemented in MoRE, user-defined balancing periods of any length can be created. To calculate the river loads, the total emissions are calculated for each analytical unit and reduced by a retention factor before being summed up according to the runoff routing. The mean of the yearly loads is then calculated for the balancing period.
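The river load calculation can be sketched in Python under simplifying assumptions. Each analytical unit drains into at most one downstream unit. The retention factor is a fraction between 0 and 1 applied only to the unit's own emissions. The balancing period mean is the arithmetic mean of the yearly loads. MoRE's actual retention approaches may differ, and all IDs and values are hypothetical.

```python
from statistics import fmean
from typing import Optional


def yearly_loads(emissions: dict[int, float], retention: dict[int, float],
                 downstream: dict[int, Optional[int]]) -> dict[int, float]:
    """Load leaving each unit in one year: own emissions after retention plus upstream loads."""
    upstream: dict[int, list[int]] = {unit: [] for unit in emissions}
    for unit, target in downstream.items():
        if target is not None:
            upstream[target].append(unit)

    loads: dict[int, float] = {}

    def load(unit: int) -> float:
        # memoized recursion over the runoff routing (assumes the routing has no cycles)
        if unit not in loads:
            own = emissions[unit] * (1.0 - retention[unit])
            loads[unit] = own + sum(load(up) for up in upstream[unit])
        return loads[unit]

    for unit in emissions:
        load(unit)
    return loads


def bp_mean_loads(emissions_by_year: dict[int, dict[int, float]], retention: dict[int, float],
                  downstream: dict[int, Optional[int]]) -> dict[int, float]:
    """Arithmetic mean of the yearly loads over all years of the balancing period."""
    per_year = [yearly_loads(e, retention, downstream) for e in emissions_by_year.values()]
    return {unit: fmean([loads[unit] for loads in per_year]) for unit in per_year[0]}


downstream = {1: 2, 2: 3, 3: None}        # unit 3 is the outlet
retention = {1: 0.5, 2: 0.25, 3: 0.0}     # hypothetical retention fractions
emissions = {2015: {1: 10.0, 2: 20.0, 3: 5.0},
             2016: {1: 20.0, 2: 20.0, 3: 5.0}}
print(bp_mean_loads(emissions, retention, downstream))  # {1: 7.5, 2: 22.5, 3: 27.5}
```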

Calculation runs for balancing periods are executed similarly to those for single years. After selecting the analytical units (object table modeling > spatial modeling units > analytical units), the special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR BALANCING PERIODS is activated in the toolbar. The window MoRE: calculation of balancing periods opens and lists all available algorithm stacks. Here, the desired algorithm stack, the substances and the balancing period have to be selected. The calculation starts when the button START CALCULATION RUN is clicked. If final result sets exist, the user can choose whether the river loads are calculated from these existing results or from newly calculated emissions.

(I) Starting special function TOOLS

(II) Selecting the special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR BALANCING PERIODS

(III) Selecting the algorithm stack

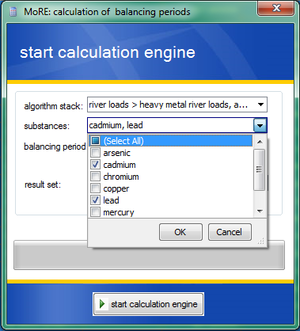

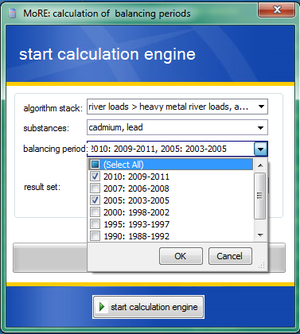

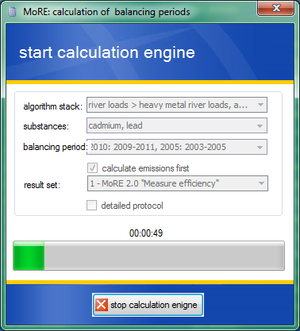

(IV) Selecting the substances

(V) Selecting the balancing periods

(VI) Executing the calculation run

Calculation run vs. detailed protocol

By default, a calculation run in MoRE evaluates all formula contents but writes only the defined output variables and their values to the result table. This keeps the computing time to a minimum. The results of the calculation runs are listed in the object table modeling > results > preliminary > calculation runs. If additional variables are to be output, they can be selected in the column “output” of the object table modeling > metadata for the corresponding variable type (periodical analytical units variables or periodical point source variables). MoRE can also create a detailed protocol for a calculation run. A detailed protocol lists every input variable and every generated intermediate and final result with its value, as well as all formulas used in the calculation run. However, generating a protocol slows down the calculation considerably. It is therefore recommended to run a calculation with a detailed protocol for only a few analytical units after implementing new modeling approaches. The protocols can then be used to detect errors and to verify calculation approaches.
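The difference between a plain calculation run and a detailed protocol can be modeled in a few lines of Python. In this hypothetical sketch, every formula is evaluated in both cases. Only the output-flagged variables are returned as results, while the protocol additionally records inputs, formula contents and all intermediate values. Variable names and the tuple layout are assumptions.

```python
from typing import Callable

Values = dict[str, float]
Step = tuple[str, str, Callable[[Values], float]]  # (result variable, formula content, computation)


def execute(steps: list[Step], inputs: Values, output: set[str],
            with_protocol: bool = False) -> tuple[Values, list[tuple[str, str, float]]]:
    """Evaluate all steps; return output-flagged results and, optionally, a full protocol."""
    values = dict(inputs)
    protocol = [(name, "input", value) for name, value in inputs.items()] if with_protocol else []
    for result, content, compute in steps:
        values[result] = compute(values)  # every formula is evaluated in both modes
        if with_protocol:
            protocol.append((result, content, values[result]))
    results = {name: values[name] for name in output}  # only variables flagged for output
    return results, protocol


steps = [("LOAD", "Q * C", lambda v: v["Q"] * v["C"]),
         ("LOAD_RED", "LOAD * 0.5", lambda v: v["LOAD"] * 0.5)]
inputs = {"Q": 2.0, "C": 3.0}
print(execute(steps, inputs, {"LOAD_RED"}))
print(execute(steps, inputs, {"LOAD_RED"}, with_protocol=True)[1])
```

In this toy version the protocol grows with every input and formula, which mirrors why protocol runs are best limited to a few analytical units.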

Calculations after changes in the system

To carry out modified calculations with MoRE, the necessary variables, input data, formulas, algorithms and algorithm stacks have to be created. This can be done either by creating new MoRE components or by creating variants of existing ones.

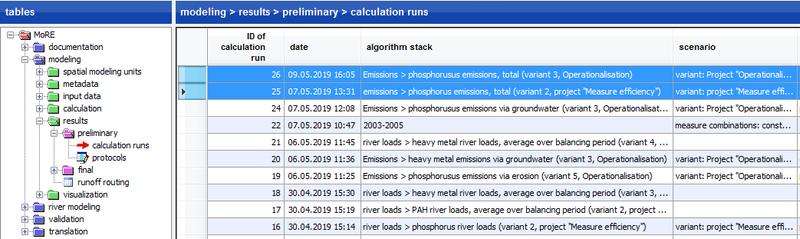

Export results

Results are saved either as a calculation run or as a detailed protocol, in the object tables results > preliminary > calculation runs and results > preliminary > protocols, respectively. They are displayed with a consecutive ID in the data grid.

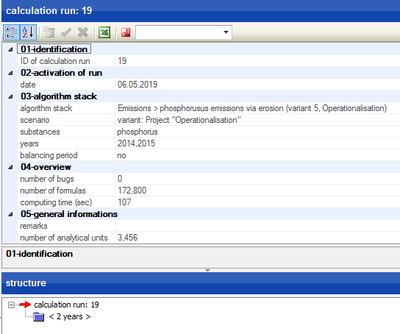

For a selected calculation run, more detailed information is shown in the attribute and structure windows.

Export of calculation runs

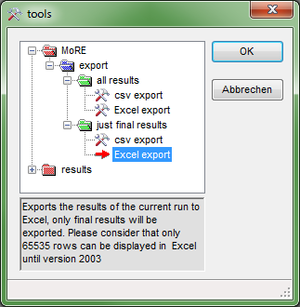

Calculation runs are displayed in the data grid of the object table results > preliminary > calculation runs. To export results, select the desired calculation run and execute TOOLS → MORE → JUST FINAL RESULTS or ALL RESULTS → EXCEL EXPORT from the toolbar. Here, the data can be exported either as an Excel file or as a csv file.

Please note: If the data volume to be exported is very large, the following error message appears:

“Not enough main memory available, please export fewer data sets.”

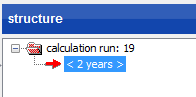

In this case, click on <n years> in the structure window on the right. The data grid then shows the individual years, which can be exported one at a time.
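When exported results are processed further outside MoRE, the same year-by-year split keeps individual files small. The following Python sketch uses only the standard library. It groups result rows by year and produces one CSV text per year. The column names are assumptions, not MoRE's export layout.

```python
import csv
import io
from collections import defaultdict


def csv_by_year(rows: list[dict]) -> dict[int, str]:
    """Group result rows by their 'year' field and render one CSV text per year."""
    by_year: dict[int, list[dict]] = defaultdict(list)
    for row in rows:
        by_year[row["year"]].append(row)

    files: dict[int, str] = {}
    for year, year_rows in sorted(by_year.items()):
        buffer = io.StringIO()
        writer = csv.DictWriter(buffer, fieldnames=list(year_rows[0]))
        writer.writeheader()
        writer.writerows(year_rows)
        files[year] = buffer.getvalue()
    return files


results = [  # hypothetical result rows
    {"unit": 10040, "year": 2015, "variable": "E_TOT", "value": 1.5},
    {"unit": 10041, "year": 2015, "variable": "E_TOT", "value": 2.0},
    {"unit": 10040, "year": 2016, "variable": "E_TOT", "value": 1.7},
]
for year, text in csv_by_year(results).items():
    print(year, len(text.splitlines()) - 1, "data rows")
```

Each text can then be written to its own file, opened with `newline=""` so that the csv module's line endings are preserved.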

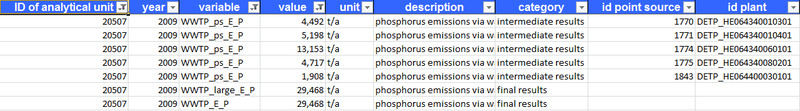

If modeling results with point source reference are exported to Excel, the same sheet will contain the results for each individual point source as well as the sum of all point sources of the same kind within the analytical unit. This allows further calculation with other results on the level of analytical units. The variables with point source reference are additionally marked with the attributes “ID point source” and “ID plant”; when aggregated results for an analytical unit are shown, these columns remain empty.

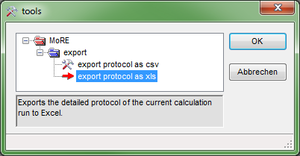

Export of protocols

All calculation runs are also listed in the object table modeling > results > preliminary > protocols. However, protocols can only be exported for calculation runs that were computed with a protocol. The column “with protocol” indicates with yes or no whether a protocol is available.

The export of protocols is similar to the export of model results. The desired calculation run (column “with protocol” has to contain a “yes”) is selected and via the toolbar the special function TOOLS → EXPORT → EXPORT PROTOCOL AS XLS is used to export the protocol as Excel file.

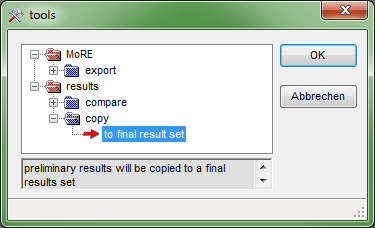

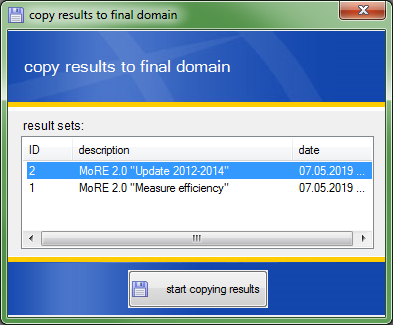

Save results in results sets

Final results can be displayed as result sets in the MoRE Visualizer. First, a new data set has to be created in writing mode in the object table modeling > results > final > result sets.

Subsequently, the desired calculation run is selected in the object table results > preliminary > calculation runs, and the special function TOOLS → RESULTS → COPY → TO FINAL RESULTS is used to copy it into a selected final result set. The results are then also available in the MoRE Visualizer.

Modeling with variants in MoRE

Computing variants is possible on two levels in MoRE. The user can model on the basis of

- Variants of input data and / or

- Variants of calculation approaches.

For example, the modeling with variants of input data can be used for consideration of data sets of differing spatial resolution. This is done with the so-called variant manager. Variants of approaches are not executed with a tool, but are realized by managing formulas, algorithms and algorithm stacks. For this, different combinations of formulas, algorithms and algorithm stacks can be created. Thus, modeling can be performed using these different approaches. The goal of computing variants is the evaluation and comparison of the available approaches and input data sets for emission modeling.

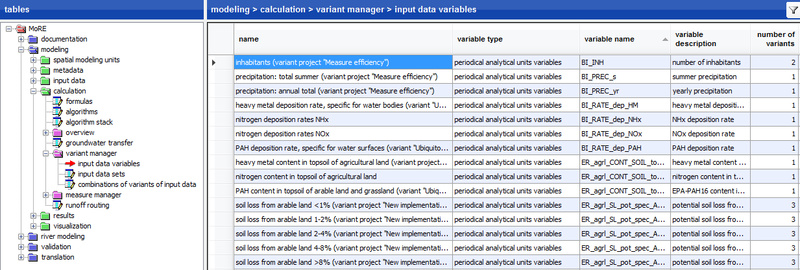

Variants of input data: Variant manager in MoRE

Input data can be derived from raw data of differing spatial and temporal resolution, preprocessing or origin, and are usually implemented in the model as variables. To use several variants of input data in the model without creating new variables, a management tool for input data, the variant manager, was implemented in MoRE. In addition to the basic input data set of a variable, further variants of input data sets for the same variable can be stored in MoRE. Calculation runs can be performed with these variants and the results compared with each other. The variant manager is found in the submenu modeling > calculation > variant manager and contains three object tables, which define which variant of input data is used for the modeling.

Concept of the variant manager and implementation in MoRE

Variants of input data can be accounted for in different emission pathways in the model. Additionally, individual input data sets can be linked to variant combinations. For the modeling with variants of input data, the following steps are necessary:

- Creation of a variant for the corresponding variable

- Import of the input data for the variant of the variable (this works like a normal import of input data)

- Definition of the variant in the variant manager:

  - Definition of the input data variable and assignment of a variant

  - Creation of input data sets and assignment of input data variables

  - Combination of different input data sets

Before executing a calculation run, the user defines with which variant combination of input data the model should perform the run.

Important: Variant 1 of each variable always has to contain data to perform a model run, even if a different variant is chosen for the calculation run.
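The way a variant combination determines the variant used for each variable can be sketched in Python. This is a hypothetical model, not MoRE's implementation. Each input data set maps variables to exactly one variant, and variables not covered by the combination fall back to variant 1. As noted above, variant 1 must contain data in every case. Treating conflicting assignments from two input data sets as an error is an assumption, and the variable names are illustrative.

```python
InputDataSet = dict[str, int]  # input data variable -> selected variant (one per variable)


def resolve_variants(variables: list[str], combination: list[InputDataSet],
                     available: set[tuple[str, int]]) -> dict[str, int]:
    """Return the variant used for each variable in a run with the given variant combination."""
    chosen = {var: 1 for var in variables}  # basic variant unless an input data set overrides it
    assigned: dict[str, int] = {}
    for data_set in combination:
        for var, variant in data_set.items():
            if assigned.get(var, variant) != variant:
                raise ValueError(f"conflicting variants for {var}")
            assigned[var] = variant
    chosen.update(assigned)
    for var, variant in chosen.items():
        if (var, 1) not in available:
            raise ValueError(f"variant 1 of {var} must contain data")
        if (var, variant) not in available:
            raise ValueError(f"variant {variant} of {var} has no data")
    return chosen


available = {("INH", 1), ("INH", 2), ("A_URBAN", 1)}  # (variable, variant) pairs with data
print(resolve_variants(["INH", "A_URBAN"], [{"INH": 2}], available))  # {'INH': 2, 'A_URBAN': 1}
```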

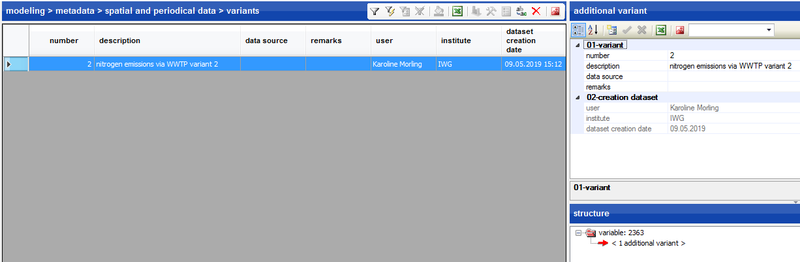

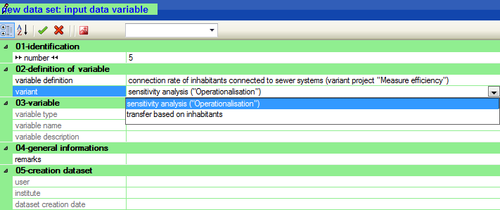

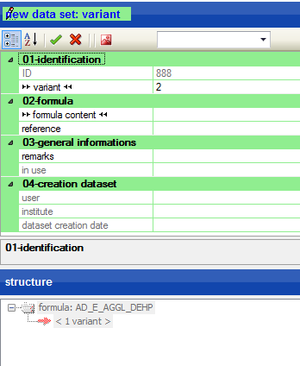

Creating variants for input data

If a basic variant of a variable was already created, further variants can be created in the metadata. Using the writing mode, click on <0 additional variants> in the structure window to create a new variant next to the basic one.

The new variant is automatically assigned a consecutive number; in this example, the variant gets the number “2”. A description has to be provided for the new variant, and a reference can also be entered. After the new variant has been created, the term <1 more variant> appears in the structure window.

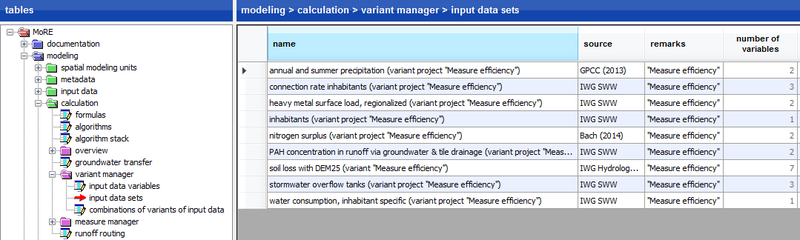

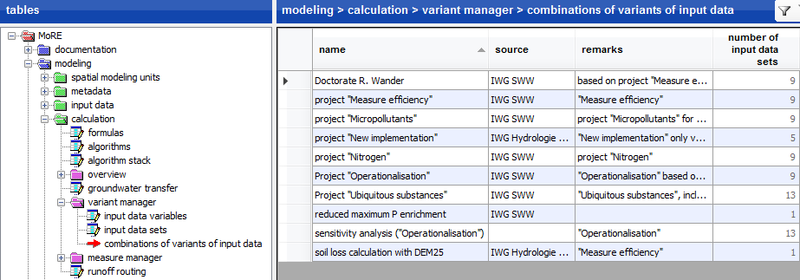

Structure of the variant manager

For the practical implementation of modeling with variants of input data, the variant manager is located in the submenu modeling > calculation. It contains the following object tables:

- input data variables,

- input data sets and

- variant combinations.

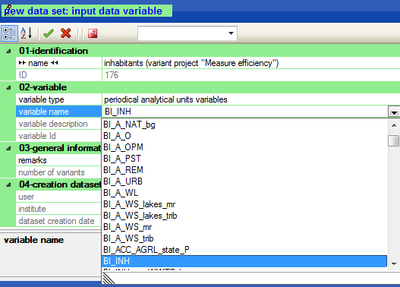

In the object table input data variables, it is defined which variable is used for the modeling with variants of input data. Here, only the variable is selected and the corresponding variant is chosen in the next step.

To add a variable to the variant manager, a new data set has to be created in the input data variables section. The desired variable is selected from a drop-down-menu.

The name of the variable can be defined here. It is recommended to use the general description of the variable (in the example: “inhabitants”) and to add a meaningful indication of the new variant (in the example: project “Measure efficiency”).

Each input data variable changes exactly one input data set in an algorithm stack. Variants of different origin or different resolution as well as differing preprocessing are possible.

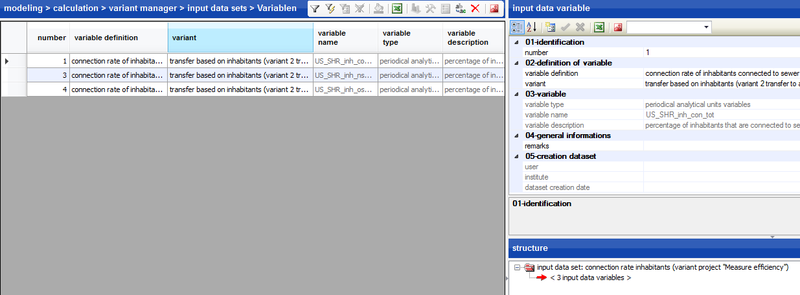

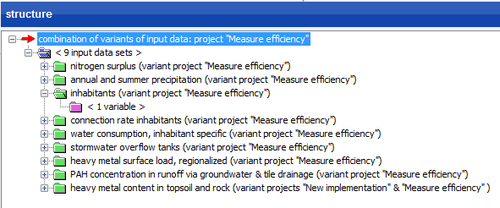

In the object table input data sets, the input data variables are linked to the input data sets.

Each input data set consists of one or more input data variables, and only one variant of each input data variable can be selected.

An input data set is described by a name, a source and the number of assigned input data variables in the data grid. Detailed descriptions of the single input data variables are given in the structure window.

To assign a variable to an input data set, a new data set has to be created. In the section 02-definition of variable, the desired input data variable is selected from a drop-down menu.

In the object table variant combinations, individual input data sets can be combined. The data grid lists all variant combinations.

By selecting a variant combination, the individual input data sets assigned to it are shown in the structure window.

The invoked information appears in the data grid. A new variant combination can be created by adding a new data set. Assigning input data sets to a new variant combination takes place via the creation of new data sets and the selection of input data sets from the drop-down-menu as well.

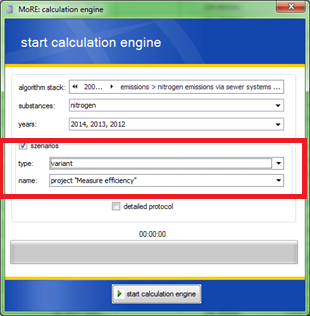

Modeling with variants of input data

In order to model with variants of input data in MoRE, a calculation run has to be started. After selecting the desired analytical units, the special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR SINGLE YEARS is activated.

Before starting the calculation run, the user can define which algorithm stack, in combination with which variant of input data, is used for the modeling of emissions. For this, set a checkmark at “scenarios”, choose “variant” as the type and select the name of the desired variant.

The results of the modeling can then be compared with the basic variant or with the results from further variant calculations, provided that these have been calculated as well.

Variants of modeling approaches

Similar to input data variants, different calculation approaches can be modeled using a variety of variants in order to compare the respective results. Additionally, it is possible to combine the approach variants with input data variants using the variant manager. This was technically implemented in MoRE as management of calculation approaches (formulas, algorithms, algorithm stacks).

Concept for approach variants and implementation in MoRE

For the modeling with variants of approaches, the following steps have to be performed:

- Creation of one or more variants of formulas or new input data which are needed for the new approach. In this context, the new formula contents are added or copied and modified from already existing formulas.

- If necessary, new input data have to be imported.

- Creation of a new algorithm or copying an already existing algorithm in which the new formula variant should be applied. Within the new algorithm, the desired formula variant is selected as the corresponding calculation step. Thereby, the variant can be combined with other input variables.

- Creation of a new algorithm stack or copying an existing algorithm stack in which the new algorithm variant should be applied. Here, the assignment of the new algorithm takes place at the corresponding position.

Before running a calculation, the correct variant of the algorithm stack with the corresponding variants of formulas has to be selected. The detailed application is described in the following sections.

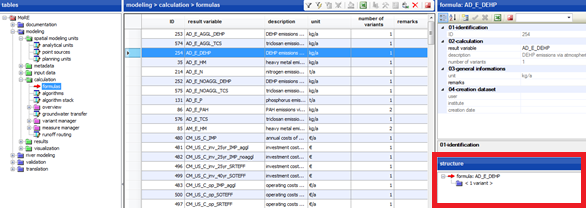

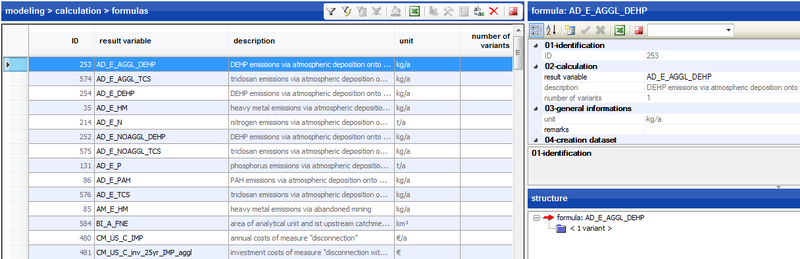

Create variants of modeling approaches

Using variants, it is possible to compare two different calculation approaches. Via the object table modeling > calculation > formulas the formula, for which another variant should be created, can be selected in the data grid. Within the structure window the already existing variants can be selected. In the example, another variant of the variable AD_E_AGGL_DEHP will be created. For this, one has to click on <1 variant>.

Then, a new data set can be created in the attribute window, following the procedure described earlier. The variant number appears automatically in the field variant; in this example, it is number “2”. Now, the formula content and, if needed, the reference have to be entered. Subsequently, the data set can be transferred to the database.

Analogously to this procedure, variants can also be created for algorithms and algorithm stacks. The creation and copying of algorithms and algorithm stacks was described in detail earlier.

Structure of approach variants

For modeling with variants of calculation approaches, the corresponding variants of formulas, algorithms and algorithm stacks have to be created first. These represent the new approaches and need to be linked. A new formula variant can be integrated in an algorithm by selecting the variant number. For this, the desired algorithm is selected within the data grid and in the structure window <n calculation steps> is chosen. Using the writing mode, it is possible to select the formula variant from a drop-down-menu. The formula content is added automatically. In the same way, new variants of algorithms can be integrated into algorithm stacks.

After all required formulas, algorithms and algorithm stacks have been created and linked, the new calculation approach can be modeled.

Modeling with variants of calculation approaches

To model variants of calculation approaches in MoRE, a calculation run has to be started. After selecting the desired analytical units, the special function TOOLS → EXECUTE CALCULATION RUN → CALCULATION FOR SINGLE YEARS is activated in the toolbar. Here, the user can define which algorithm stack is used for the modeling of emissions.

At this point, it is also possible to choose the variant of input data or measures for the modeling, provided that these variants have been created before.

The results of the modeling can then be compared with the basic variant or with results from further variant calculations, provided these have been calculated.

Comparison of results

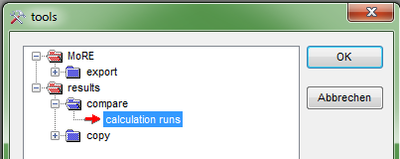

The results of two variants of input data or calculation approaches can be conveniently compared with MoRE. The results of the calculation runs are filed in the object table modeling > results > preliminary > calculation runs. To compare two calculation runs, both are selected in the data grid.

Via the toolbar the special function TOOLS → RESULTS → COMPARE → CALCULATION RUNS is activated. By clicking “OK”, the results of the two selected calculation runs will be compared.

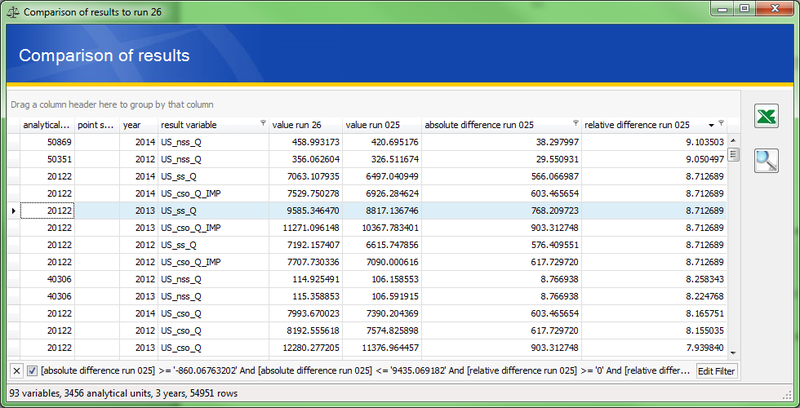

The difference of calculated values for each variable and analytical unit is given in both absolute values and percentages within a table. By filtering and sorting the columns, the desired analytical units, years and variables can be examined more closely. The table can be exported to Excel using the corresponding button on the right side.
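The comparison table can be reproduced for exported results with a short Python sketch. It assumes both runs are available as dictionaries keyed by (analytical unit, year, variable) and computes the difference of the second run relative to the first. Which run serves as the reference in MoRE, and how MoRE handles zero reference values, are assumptions; the values are hypothetical.

```python
from typing import Optional

Key = tuple[int, int, str]  # (analytical unit ID, year, variable)


def compare_runs(run_a: dict[Key, float], run_b: dict[Key, float]
                 ) -> dict[Key, tuple[float, float, float, Optional[float]]]:
    """Absolute and percentage difference of run B relative to run A, for keys present in both."""
    table = {}
    for key in sorted(run_a.keys() & run_b.keys()):
        a, b = run_a[key], run_b[key]
        diff = b - a
        percent = diff / a * 100 if a != 0 else None  # undefined for a zero reference value
        table[key] = (a, b, diff, percent)
    return table


basic = {(10040, 2015, "E_TOT"): 40.0, (10041, 2015, "E_TOT"): 0.0}
variant = {(10040, 2015, "E_TOT"): 50.0, (10041, 2015, "E_TOT"): 2.0}
for key, row in compare_runs(basic, variant).items():
    print(key, row)
```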

Advantages of the variant calculations

The use of the variant manager offers several advantages:

- individual input data sets can be linked to variant combinations and the effects of different variants of input data can be examined together

- different calculation approaches for a parameter can be represented and modeled

- different variants of input data and calculation approaches can be coupled and modeled in consideration of measures

- results of calculation runs of different variants of input data and calculation approaches can be compared

Representation of measures in emission modeling

Concept of measure calculations

Measures can be realized either via variants of input data or via adjustments of calculation approaches, so that these represent an undertaken measure.

In the simplest case, when a measure is represented by a variant of input data created outside MoRE, the measure calculation is a variant calculation. In this case, it is sufficient to declare the variant of input data as a measure in the measure manager. Existing formulas do not need to be changed.

Another possibility is to represent measures with empirical approaches. This requires the existing basic variants of formulas to be adjusted or extended so that they are valid for modeling both with and without measures. In principle, this can be achieved in two ways:

- The basic variant of a formula can be adapted so that the same formula is used in both cases, with and without the measure. This works if the measure can be expressed within the formula. The measure variant is then calculated using variants of input data for the variables by which the formula was extended.

- If the basic variant of a formula cannot represent the measure, a switch variable can be implemented. Using an if-then query, the switch variable defines whether the basic variant or the measure variant is calculated. In the measure manager, it has to be defined for which variant of the switch variable the measure is applied.

Measures can be implemented for different emission pathways in the model. In addition, single measures can be combined into measure combinations.

Example of a change in approaches

To demonstrate how empirical approaches can represent measures, two examples are introduced. The first example shows how a basic variant of a formula can be adapted. In the second example, extending the basic formula variant is not sufficient, so a switch variable is inserted.

Extending a basic variant of a formula (without switch variable)

The first example shows the implementation of the measure “disconnection (reduction of impervious surfaces)” in agglomeration areas with separate sewer systems. In the basic variant, the area of impervious surfaces in agglomeration areas connected to separate sewer systems (US_ss_A_IMP_aggl) is calculated as follows:

whereby:

| Variable | Description |
|---|---|
| US_ss_A_IMP_aggl | Area of impervious surfaces in agglomeration areas connected to separate sewer systems [km²] |
| US_A_IMP_aggl | Area of impervious surfaces in agglomeration areas [km²] |
| US_SHR_l_ss_tss | Percentage share of separate sewer system [%] |
| US_SHR_inh_conWWTP_tot | Percentage of inhabitants connected to waste water treatment plants [%] |

For this measure, the impervious area is to be reduced by a certain proportion. This can be implemented directly in the formula by inserting the variable US_ss_SHR_a_uncpl_imp_AGGL, which represents the percentage by which the impervious surface is reduced:

whereby:

| Variable | Description |
|---|---|
| US_ss_SHR_a_uncpl_imp_AGGL | Percentage of impervious surfaces in agglomeration areas which can be disconnected in separate sewer systems [%] |

For the newly inserted variable, two variants of values have to be present:

- In the basic variant, the value is 0 for all analytical units (without measure)

- For realization of the measure, the variable has a value x for each analytical unit (with measure)
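How a zero-valued basic variant leaves the result unchanged can be illustrated with a short Python sketch. Since the exact formula is not stated here, the sketch assumes a hypothetical form: the basic formula multiplies US_A_IMP_aggl by the two percentage shares, and the measure scales the result by the share of impervious surface that is not disconnected. Both the functional form and the parameter names are assumptions, not MoRE's formula.

```python
def imp_area_separate_sewer(
    a_imp_aggl: float,       # US_A_IMP_aggl [km²]
    shr_ss: float,           # US_SHR_l_ss_tss [%]
    shr_con_wwtp: float,     # US_SHR_inh_conWWTP_tot [%]
    shr_uncpl: float = 0.0,  # US_ss_SHR_a_uncpl_imp_AGGL [%], 0 in the basic variant
) -> float:
    """Impervious area in agglomerations connected to separate sewers [km²].

    ASSUMED form (illustrative only): product of the impervious area and the
    two shares, reduced by the disconnected share of impervious surfaces.
    """
    basic = a_imp_aggl * (shr_ss / 100.0) * (shr_con_wwtp / 100.0)
    return basic * (1.0 - shr_uncpl / 100.0)


if __name__ == "__main__":
    # Made-up input values
    print(imp_area_separate_sewer(10.0, 50.0, 80.0))        # basic variant
    print(imp_area_separate_sewer(10.0, 50.0, 80.0, 25.0))  # with measure
```

With the basic variant (value 0), the reduction factor is 1 and the result equals the basic formula; only the measure variant of the input data changes it.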

Changing the basic variant of a formula (using a switch variable)

If the scenarios without and with a measure require different formulas, a switch variable can define which formula is used for the calculation.

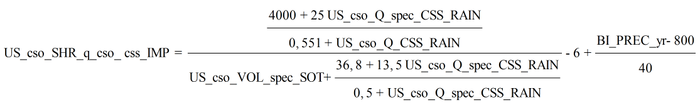

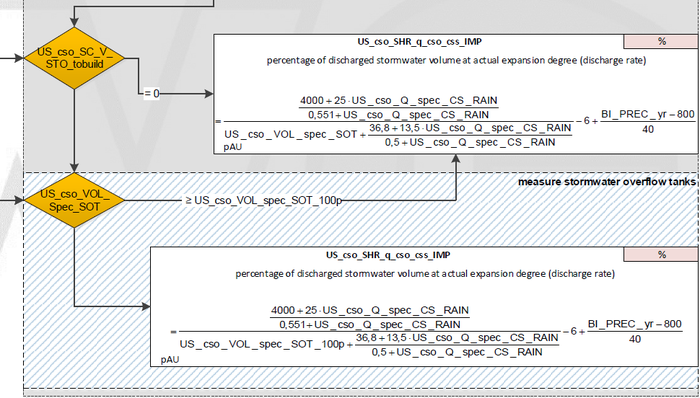

This will be explained using the measure “construction of storage volume of storm water overflow tanks in combined sewer systems”. The basic formula of the discharge rate is:

whereby:

| Variable | Description |
|---|---|
| US_cso_SHR_q_cso_css_IMP | Percentage of discharged stormwater volume at actual expansion degree (discharge rate) [%] |
| US_cso_Q_spec_CS_RAIN | Runoff rate rain in combined sewer [L/(ha*s)] |
| US_cso_VOL_spec_SOT | Storage volume of stormwater overflow tanks in combined sewer systems, area-specific [m³/ha] |
| BI_PREC_yr | Yearly precipitation [mm/a] |

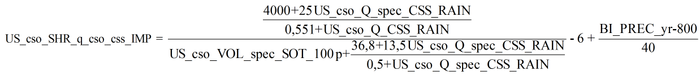

The measure compares the actual specific storage volume with a required volume at the level of analytical units. In this example, the required volume is 23.3 m³/ha. If the specific volume is equal to or larger than the required one, no further storage volume is needed and the basic variant of the formula is kept. If the specific volume is smaller than 23.3 m³/ha, the storage volume is increased to this value and used for the calculation. In the latter case, the formula needs to be adapted. In this example, the variable US_cso_VOL_spec_SOT_100p is used in the formula instead of US_cso_VOL_spec_SOT:

Whether the calculation is done without or with the measure has to be defined via a switch variable (in this case US_cso_SC_V_STO_tobuild). By MoRE convention, the switch variable has the value 0 for the variant without the measure. If the measure is considered in the modeling, the switch variable has the value 1. This assignment is made for the corresponding measures in the measure manager.
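The if-then logic of the switch variable can be sketched in Python as follows. The discharge-rate formula itself is left out; the sketch only chooses which storage volume enters it. It assumes that US_cso_VOL_spec_SOT_100p equals the actual specific volume raised to the required 23.3 m³/ha where it falls short, as described above. The function name is hypothetical.

```python
REQUIRED_VOL = 23.3  # required specific storage volume [m³/ha] from the example


def storage_volume_for_formula(vol_spec_sot: float, switch: int) -> float:
    """Select the storage volume [m³/ha] used in the discharge-rate formula.

    switch == 0 (without measure): actual volume, US_cso_VOL_spec_SOT.
    switch == 1 (with measure): assumed US_cso_VOL_spec_SOT_100p, i.e. the
    actual volume raised to REQUIRED_VOL where it is smaller.
    """
    if switch == 0:
        return vol_spec_sot
    if switch == 1:
        return max(vol_spec_sot, REQUIRED_VOL)
    raise ValueError("switch variable must be 0 or 1")
```

Keeping the branch in one place mirrors the role of the switch variable: one formula serves both scenarios, and only the value 0 or 1 decides which branch is evaluated.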

The entire formula looks like this:

Steps necessary for measure modeling

The following steps are needed when modeling measures:

- Create the required variables in their basic variant and, if needed, in the measure variant.

- Adapt or extend the required formulas with the corresponding variables so that they represent the measure.

- If the implementation of the measure requires it, import the input data of the variables for the basic variant and the measure variant into MoRE. These imported data then determine how the measure is implemented.

In the object table modeling > calculation > measure manager, the assignment of the variables and measures takes place in the following sequence:

- Definition of measure variables

- Definition of single measures

- Assignment of measure variables to single measures

- Definition of measure combinations

- Linking of single measures to measure combinations
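How single measures and measure combinations relate to variable variants can be sketched with two Python data classes. This is a hypothetical model, not MoRE's database schema: each single measure maps measure variables to the variant used when the measure is active, and a combination overlays its measures on the basic variants. The conflict rule (two measures may not select different variants of the same variable) and the variant labels are assumptions.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SingleMeasure:
    name: str
    # measure variable -> variant of that variable used when the measure is active
    variable_variants: Dict[str, str] = field(default_factory=dict)


@dataclass
class MeasureCombination:
    name: str
    measures: List[SingleMeasure] = field(default_factory=list)

    def resolve_variants(self, basic: Dict[str, str]) -> Dict[str, str]:
        """Return the variant of each variable for this combination.

        Starts from the basic variants and overrides them with the variants
        of every linked single measure. Raises ValueError if two measures
        select different variants of the same variable (assumed rule).
        """
        resolved = dict(basic)
        chosen_by: Dict[str, str] = {}
        for measure in self.measures:
            for variable, variant in measure.variable_variants.items():
                if variable in chosen_by and resolved[variable] != variant:
                    raise ValueError(
                        f"{variable}: {chosen_by[variable]} and {measure.name} conflict"
                    )
                resolved[variable] = variant
                chosen_by[variable] = measure.name
        return resolved
```

For example, a combination of a storage-tank measure (switch variable US_cso_SC_V_STO_tobuild set to its variant "1") and a disconnection measure (US_ss_SHR_a_uncpl_imp_AGGL set to a measure variant) would return both overrides while leaving all other variables at their basic variants.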

The results of the calculation runs with different measures can be compared to one another after the modeling.

Aggregation of emissions on the basis of planning units

In addition to analytical units and point sources, planning units are implemented in MoRE as further spatial modeling units. The allocation of analytical units to planning units is documented in the object table modeling > spatial modeling units > planning units.

The allocation of analytical units (and parts of analytical units) to planning units can be managed in the structure window. This allows every user to create, edit or delete the higher-level spatial units.

MoRE primarily models at the level of analytical units. To obtain results for planning units, the results are copied to the object table modeling > results > final after modeling. There, a special function (TOOLS → PLANNING UNITS → CALCULATE RESULTS) aggregates the results of the analytical units to the level of the planning units, depending on the emission pathway and the assigned factors.

The aggregated results are then integrated in the result data set from which they were created.
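The aggregation step can be illustrated as a factor-weighted sum. The Python sketch below assumes that each allocation record assigns a factor (the share of an analytical unit's value that belongs to a planning unit) and that the function is called once per emission pathway with that pathway's allocation list. The record layout and the planning-unit IDs are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# (analytical unit ID, planning unit ID, factor) for one emission pathway;
# factor = assumed share of the analytical unit's value in the planning unit
Allocation = Tuple[int, str, float]


def aggregate_to_planning_units(
    au_results: Dict[int, float], allocations: List[Allocation]
) -> Dict[str, float]:
    """Sum factor-weighted analytical-unit results per planning unit."""
    totals: Dict[str, float] = defaultdict(float)
    for au_id, pu_id, factor in allocations:
        # A KeyError here means an allocation refers to an unmodeled unit
        totals[pu_id] += factor * au_results[au_id]
    return dict(totals)
```

Parts of analytical units are represented by factors below 1. If the factors of each analytical unit sum to 1, the planning-unit totals add up to the total of the analytical units.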

Groundwater transfer

The groundwater transfer was integrated in MoRE to reflect the actual groundwater flow (and the related substance emissions), which can differ from the surface flow represented by the runoff routing. Analytical units in MoRE are surface catchment areas, and the modeled emissions are aggregated along the runoff routing, which describes how the modeled area is drained. Because subsurface drainage can deviate from the processes at the surface, the groundwater transfer tool makes it possible to transfer the groundwater-derived emissions of one analytical unit to another. For example, part of the groundwater emissions of an analytical unit that drains at the surface into the River Neckar (Rhine catchment) can be transferred to the Danube catchment.

Integration of metadata and input data

In order to perform a transfer, it must be defined from which to which analytical unit the transfer takes place, for which variables the transfer applies and which proportion of the total value has to be transferred. These definitions are currently made manually in the object table modeling > calculation > ground water transfer.

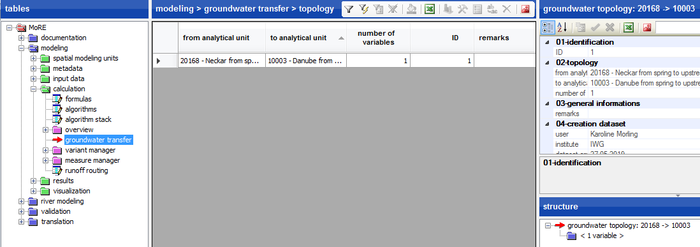

As a first step, a new data set is created that defines from which analytical unit to which other analytical unit the transfer takes place. For each of these data sets, the user can then define via the structure window for which variables the transfer applies and which proportion of the value is transferred. For instance, the data grid of the object table groundwater transfer shows a transfer from analytical unit 20168 to 10003.

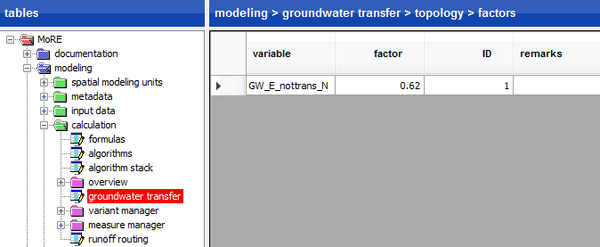

Via the structure window, the data grid can display the list of variables for which the transfer from analytical unit 20168 to 10003 takes place. For each listed variable, the proportion transferred from one analytical unit to the other is documented. In this example, 62% of the calculated value for analytical unit 20168 is transferred to analytical unit 10003 and added to its value.
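The arithmetic of this example (62% of the value of analytical unit 20168 added to analytical unit 10003) can be sketched in Python. Two points are assumptions, not documented MoRE behavior: the transferred share is removed from the source unit, and all proportions refer to the values before any transfer, so the order of the transfer records does not matter. The function name and the input values are made up.

```python
from typing import Dict, List, Tuple

# (source analytical unit, target analytical unit, transferred proportion 0..1)
Transfer = Tuple[int, int, float]


def apply_gw_transfer(values: Dict[int, float], transfers: List[Transfer]) -> Dict[int, float]:
    """Move the given proportion of each source value to its target unit.

    Proportions refer to the values before any transfer. ASSUMPTION: the
    transferred share is subtracted from the source unit.
    """
    result = dict(values)
    for source, target, proportion in transfers:
        amount = values[source] * proportion
        result[source] -= amount
        result[target] = result.get(target, 0.0) + amount
    return result


if __name__ == "__main__":
    # Made-up nitrogen emissions before the transfer
    before = {20168: 100.0, 10003: 40.0}
    print(apply_gw_transfer(before, [(20168, 10003, 0.62)]))
```

Under these assumptions the sum over all units stays the same, so the transfer only redistributes emissions between catchments.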

Calculations with groundwater transfer

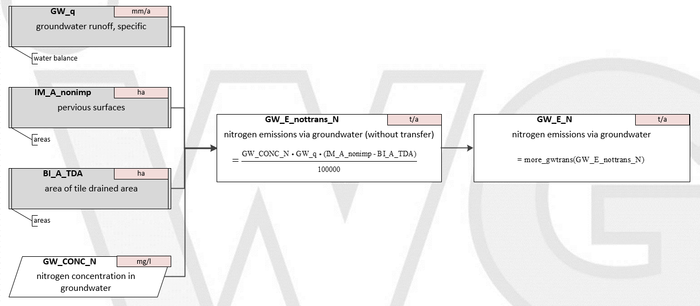

Because all calculation steps in MoRE are executed in formulas, two variables need to be defined for the groundwater transfer: one that describes the value before the transfer and one that represents the value after the transfer. It is recommended to use the same variable name for both and to add “nottrans” to the name of the variable that does not yet include the transfer.

The variable for which the transfer is considered is calculated using the function more_gwtrans().

For example: GW_E_N = more_gwtrans(GW_E_nottrans_N), whereby GW_E_N describes the nitrogen emissions via groundwater under consideration of the transfer, while GW_E_nottrans_N does not consider the transfer.

If a calculation run contains a formula with groundwater transfer, the calculation sequence is interrupted. The software first executes all calculation steps up to the transfer, then executes the transfer for the defined analytical units and writes these values to a buffer. After that, the calculation is restarted from the beginning, and when the transfer is reached, the transferred values are taken from the buffer. For this reason, the computing time for the algorithm stack increases.
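The two-pass sequence can be sketched in Python for a calculation that evaluates the formulas unit by unit. Everything here is hypothetical: formulas are plain functions of a unit's variables, the transfer formula's own function is ignored and its value comes from the buffer (standing in for more_gwtrans()), and the transfer is assumed to remove the moved share from the source unit. The sketch also shows why the computing time grows: the formulas up to the transfer are evaluated twice.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, float]
# (target variable, formula computing it from the unit's current variables)
Formula = Tuple[str, Callable[[State], float]]
Transfer = Tuple[int, int, float]  # (source unit, target unit, proportion)


def run_with_gw_transfer(
    inputs: Dict[int, State],
    formulas: List[Formula],
    transfer_var: str,   # e.g. "GW_E_N", computed via the transfer
    nottrans_var: str,   # e.g. "GW_E_nottrans_N", value before the transfer
    transfers: List[Transfer],
) -> Dict[int, State]:
    # Pass 1: evaluate each unit only up to the transfer formula.
    nottrans: Dict[int, float] = {}
    for unit, data in inputs.items():
        state = dict(data)
        for target, formula in formulas:
            if target == transfer_var:
                break
            state[target] = formula(state)
        nottrans[unit] = state[nottrans_var]

    # Execute the transfer for all units and keep the result in a buffer.
    buffer = dict(nottrans)
    for source, target, proportion in transfers:
        amount = nottrans[source] * proportion
        buffer[source] -= amount
        buffer[target] += amount

    # Pass 2: restart from the beginning; the transfer value comes from the buffer.
    results: Dict[int, State] = {}
    for unit, data in inputs.items():
        state = dict(data)
        for target, formula in formulas:
            state[target] = buffer[unit] if target == transfer_var else formula(state)
        results[unit] = state
    return results
```

Formulas after the transfer (for example a total that adds GW_E_N to other pathways) see the transferred value in the second pass, which is why the whole stack has to be run again rather than only patching the transferred variable.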

Validation of results with observed river loads

We are currently working on this part.

Documentation module

An extensive concept for documenting primary data sets and input data was developed in MoRE in order to maintain high transparency about the origin of the data. Primary data are data sets from external sources, which may be protected by third-party rights. They comprise in particular point-source and area-specific data as well as statistical and monitoring data. The input data in MoRE are operable parameters used for the modeling. They are derived from the primary data and are usually stored in MoRE with reference to analytical units or point sources. Several primary data sets can be used to create one input data set. Entering information about the primary and input data sets is not mandatory, but it is recommended because it increases model transparency. New object tables were created in MoRE to manage the metadata of primary and input data sets. Their most important attributes are described in the following sections.

Object table primary data sets

For the documentation of primary data sets, the following categories are used:

- Description of data set: information like the full name of the data set, the unit and the substance reference is stored here.

- Data origin: specifications about the reference, type and date of the data acquisition as well as the methods and the date of creating the data set are made here.

- Data format: the format of the primary data set is specified in this part. Important information such as data format, georeferenced system, data type, scale, and spatial and temporal resolution is documented.

- Scope: the temporal and spatial applicability of the primary data set is specified. This can differ from its actual use in the modeling, which may be extended by extrapolation.

- Right of use: specifications about the data usage.

- Utilization: states in which module the primary data set is used, which component refers to this data and which emission pathways use input data derived from this primary data set.

- Comments

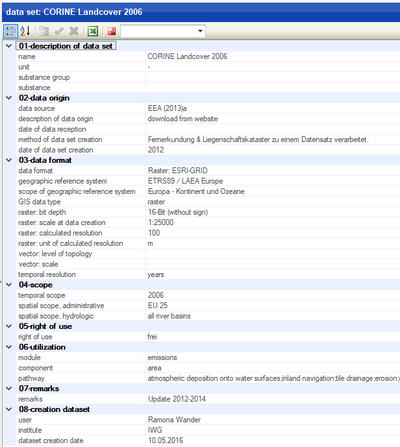

The figure shows, as an example, the metadata specifications of the land use data set CORINE Landcover 2006 of the European Environment Agency (EEA 2012).

While some of the fields can be filled directly with text, others are selection fields that offer only content predefined in other tables. There are single and multiple selection fields, whose contents can be predefined under documentation > selection fields, as well as fields referring to other object tables. The following table gives an overview of the selection field types and their references.

| Field name | Free text field | Selection field | Reference to table | Comments | ||

| single | multiple | |||||

| Name | X | |||||

| Unit | X | Documentation > selection fields | ||||

| Substance group | X | Documentation > selection fields | ||||

| Substance | X | Modeling > metadata > substances | ||||

| Data source | X | Documentation > references | ||||

| Description data origin | X | Documentation > selection fields | ||||

| Date of data acquisition | Type: Date | |||||

| Method data set creation | X | |||||

| Date data set creation | X | |||||

| Data format | X | Documentation > selection fields | ||||

| Georeferenced system | X | Documentation > geographic reference system | ||||

| Extent of validity georeferenced system | Documentation > geographic reference system | Read only | ||||

| GIS-data type | X | Documentation > selection fields | ||||

| Raster | Bit depth | X | ||||

| Scale at data set creation | X | |||||

| Computational resolution | Type: Double | |||||

| Unit of computational resolution | X | Documentation > selection fields | ||||

| Vector | Level of topology | X | Documentation > selection fields | |||

| Scale | X | Documentation > selection fields | ||||

| Temporal resolution | X | Documentation > selection fields | ||||

| Period of validity | X | Format: 2008-2010, 2012, 2014 | ||||

| Spatial area of validity | administrative | X | ||||

| hydrologically | X | |||||

| Right of use | X | |||||

| Module | X | |||||

| Parameter | X | |||||

| Emission pathway | X | |||||

| Comments | X | |||||
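The “Period of validity” field uses the format shown in the table (2008-2010, 2012, 2014). The following Python sketch expands such a value into individual years, for example for consistency checks or filtering. The function is hypothetical and assumes that only single years and year ranges, separated by commas, occur in the field.

```python
from typing import List


def parse_period_of_validity(text: str) -> List[int]:
    """Expand e.g. "2008-2010, 2012, 2014" into a sorted list of years."""
    years = set()
    for part in text.split(","):
        part = part.strip()
        if not part:
            continue
        if "-" in part:
            # Year range such as "2008-2010" (both ends inclusive)
            start_str, end_str = part.split("-", 1)
            start, end = int(start_str), int(end_str)
            if start > end:
                raise ValueError(f"invalid range: {part!r}")
            years.update(range(start, end + 1))
        else:
            years.add(int(part))
    return sorted(years)
```

Returning a sorted list of distinct years makes overlapping entries (e.g. "2008-2010, 2010") harmless and lets the result be compared directly with the years of a calculation run.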

The metadata entries created in the object table documentation > primary data sets can be assigned to the input data sets in the object table documentation > input data sets.

Object table input data sets

In the object table documentation > input data sets, the metadata of the input data sets are collected, and it is defined from which primary data sets they have been derived. The entries of this table are referenced by the attribute “data origin” of the input data. If input data are imported with a “data origin” entry that does not match any existing entry of the table, a new entry is created automatically; it can be complemented with additional information afterwards. For the documentation of input data, the following categories are used:

- Description: Name, description and unit are filed here.

- Data origin: the primary data sets used to derive the input data set are documented here, together with the method used for the derivation, the date the input data set was created and the editing user.

- Scope: the spatial and temporal validity of the input data set is specified.

- Remarks

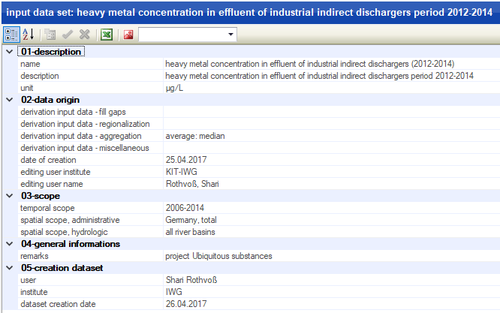

Additionally, files with supplementary information, e.g. extensive documentation of the data preprocessing, can be attached via the document management. The figure shows an example of the metadata of a project-specific input data set of heavy metal concentrations in the effluent of industrial dischargers.

Each input data set is assigned to primary data sets via the structure window. Any number of primary data sets used to create the input data can be selected. Here, the methods applied to the primary data sets to derive the input data set can also be specified.
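The automatic creation of input-data-set entries on import and the assignment of primary data sets together with their derivation methods can be sketched in Python as follows. The class and function names, the selected fields and the dictionary-based catalog are hypothetical simplifications of the object tables described above, not MoRE's data model.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class InputDataSetMeta:
    name: str
    description: str = ""
    unit: str = ""
    # primary data set name -> method used to derive this input data set from it
    primary_data_sets: Dict[str, str] = field(default_factory=dict)


def entry_for_import(catalog: Dict[str, InputDataSetMeta], data_origin: str) -> InputDataSetMeta:
    """Return the entry referenced by an import's "data origin" value.

    If no entry exists yet, a stub entry is created so that it can be
    complemented with further metadata later.
    """
    if data_origin not in catalog:
        catalog[data_origin] = InputDataSetMeta(name=data_origin)
    return catalog[data_origin]


def assign_primary(entry: InputDataSetMeta, primary_name: str, method: str) -> None:
    """Link a primary data set and its derivation method to an input data set."""
    entry.primary_data_sets[primary_name] = method
```

Storing the method alongside each linked primary data set keeps the many-to-many relation and the derivation step in one record, which matches the idea of documenting both in the structure window.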